How to Scale AI Solutions Without Losing Data Privacy

Three months into scaling an AI product, a founder once told me:

"We didn’t expect privacy to become the biggest problem."

Of course they didn’t.

Everyone focuses on performance. Speed. Accuracy. Growth curves. No one plans for what happens when your AI starts touching millions of data points instead of thousands.

Let me ask you something.

What breaks first when AI scales?

Not your model. Not your infrastructure.

Your trust layer.

And once that cracks… recovery is expensive. Sometimes impossible.

What Does It Mean to Scale AI Solutions?

Scaling AI isn’t just about handling more users. That’s the surface-level definition.

The real shift?

You move from automation → decision intelligence.

At small scale:

AI helps automate repetitive tasks

At large scale:

AI starts influencing decisions

Customer experiences depend on it

Business outcomes rely on it

Example?

A chatbot handling 100 queries a day is automation. The same system handling 100,000 queries a day and shaping customer sentiment is decision infrastructure.

And here’s the catch (most teams miss this):

The more valuable your AI becomes, the more sensitive the data it touches.

Why Data Privacy Becomes a Bigger Problem at Scale

Scaling multiplies everything.

Including risk.

More Users = More Data Exposure

More users means:

More personal data

More behavioral data

More integration points

Each one is a potential vulnerability.

Real Risks You Can’t Ignore

Let’s not sugarcoat this.

Data breaches → Direct financial loss + legal chaos

Regulatory penalties → Fines that hurt your finances and your reputation

Loss of customer trust → The silent killer

I’ve seen companies recover from bugs.

I’ve rarely seen them recover from broken trust.

Common Mistakes Businesses Make While Scaling AI

This is where things usually go wrong.

And it’s rarely because of bad intentions.

1. Ignoring Data Governance

No clear policies. No ownership. Just “we’ll fix it later.”

Later never comes.

2. Using Unsecured APIs

Quick integrations. Faster launches. Hidden risks.

(Yes, I’ve seen production systems leaking data through third-party APIs.)
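One common safeguard for service-to-service and webhook traffic is to sign each request and reject anything unsigned, altered, or stale. Below is a minimal sketch using only Python's standard library. The signing scheme (HMAC-SHA256 over a timestamp plus the body), the secret, and the 5-minute window are assumptions for illustration, not any particular provider's format.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical shared secret; in practice, load it from a secrets manager.
SECRET = b"replace-with-a-real-secret"
MAX_AGE_SECONDS = 300  # reject requests older than 5 minutes (limits replays)


def sign(body: bytes, timestamp: int, secret: bytes = SECRET) -> str:
    """HMAC-SHA256 over '<timestamp>.<body>' (a hypothetical scheme)."""
    message = str(timestamp).encode() + b"." + body
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify(body: bytes, timestamp: int, signature: str,
           now: Optional[float] = None, secret: bytes = SECRET) -> bool:
    """Accept a request only if it is recent and its signature matches."""
    now = time.time() if now is None else now
    if abs(now - timestamp) > MAX_AGE_SECONDS:
        return False
    expected = sign(body, timestamp, secret)
    # compare_digest is designed to resist timing attacks on the comparison.
    return hmac.compare_digest(expected, signature)


ts = int(time.time())
payload = b'{"user_id": 42, "event": "signup"}'
sig = sign(payload, ts)
print(verify(payload, ts, sig))             # True
print(verify(b'{"user_id": 43}', ts, sig))  # False: body was altered
```

Many webhook providers use a variant of this idea with their own header names and formats, so follow their documentation when verifying their requests. The timestamp window limits replays but does not eliminate them; tracking request IDs you have already seen closes that gap.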

3. Over-Collecting User Data

More data ≠ better AI.

Sometimes, it just means more liability.

4. No Compliance Strategy

“We’ll handle compliance once we scale.”

That’s like installing brakes after you’re already on the highway.

Core Principles of AI Data Privacy

If you remember nothing else from this article, remember this section.

Data Minimization

Collect only what you actually need. Nothing more.
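An allowlist is one simple way to enforce this in code: decide up front which fields a feature needs and drop everything else at ingestion. A minimal sketch; the field names are hypothetical.

```python
from typing import Any

# Hypothetical allowlist: the only fields this feature actually needs.
ALLOWED_FIELDS = {"user_id", "query_text", "language"}


def minimize(record: dict[str, Any], allowed: set[str] = ALLOWED_FIELDS) -> dict[str, Any]:
    """Keep only allowlisted fields; everything else is dropped before storage."""
    return {key: value for key, value in record.items() if key in allowed}


raw = {
    "user_id": "u-123",
    "query_text": "reset my password",
    "language": "en",
    "email": "jane@example.com",     # not needed for this feature
    "gps_location": (19.07, 72.87),  # not needed for this feature
}
print(minimize(raw))
# {'user_id': 'u-123', 'query_text': 'reset my password', 'language': 'en'}
```

An allowlist is used rather than a blocklist so that any new field added upstream is excluded by default until someone decides it is needed.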

Transparency

Users should know what data you collect—and why.

User Consent

Not buried in legal jargon. Clear. Explicit.
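In code, explicit consent usually means tracking it per purpose and treating "no record" as "no". A minimal sketch; the `ConsentRecord` class, the user names, and the purpose names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRecord:
    """Consent tracked per user and per purpose (purpose names are hypothetical)."""
    user_id: str
    granted: set[str] = field(default_factory=set)

    def grant(self, purpose: str) -> None:
        self.granted.add(purpose)

    def revoke(self, purpose: str) -> None:
        self.granted.discard(purpose)

    def allows(self, purpose: str) -> bool:
        # No explicit opt-in means no consent.
        return purpose in self.granted


def filter_by_consent(records: list[dict], consents: dict[str, ConsentRecord],
                      purpose: str) -> list[dict]:
    """Keep only records whose owners explicitly opted in to this purpose."""
    return [r for r in records
            if r["user_id"] in consents and consents[r["user_id"]].allows(purpose)]


alice = ConsentRecord("alice")
alice.grant("model_training")
bob = ConsentRecord("bob")  # never opted in
consents = {"alice": alice, "bob": bob}
records = [{"user_id": "alice", "text": "..."}, {"user_id": "bob", "text": "..."}]

print([r["user_id"] for r in filter_by_consent(records, consents, "model_training")])
# ['alice']
```

Keeping purposes separate means a user can agree to support chat without agreeing to have their messages used for model training, and revoking one purpose leaves the others untouched.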

Security-First Architecture

Privacy isn’t a feature you add later. It’s a foundation.

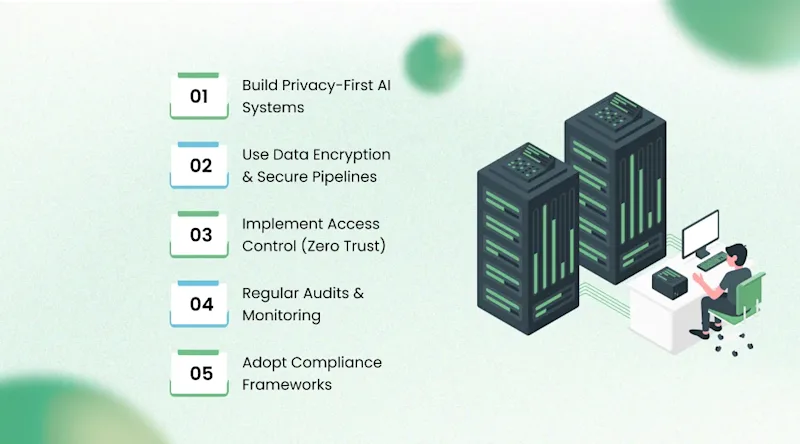

Best Practices to Scale AI Without Losing Data Privacy

This is where theory meets reality.

Build Privacy-First AI Systems

Design systems assuming data risk exists from day one.

Not after your first incident.

Use Data Encryption & Secure Pipelines

Encrypt data:

At rest

In transit

No exceptions.
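For the in-transit half, a strict client-side TLS configuration is a reasonable starting point. A minimal sketch using Python's standard `ssl` module; the function name is hypothetical and the TLS 1.2 floor is an assumed policy. Encryption at rest is usually handled by your database, storage layer, or a key management service and is not shown here.

```python
import ssl


def make_client_tls_context() -> ssl.SSLContext:
    """Client-side TLS settings for calls to internal or third-party services."""
    # create_default_context() loads the system CA certificates and turns on
    # certificate verification and hostname checking.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Refuse legacy protocol versions (assumed policy: TLS 1.2 or newer).
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = make_client_tls_context()
print(ctx.verify_mode == ssl.CERT_REQUIRED, ctx.check_hostname)  # True True
```

The resulting context can be passed as `context=` to `urllib.request.urlopen` or `http.client.HTTPSConnection`. The key design choice is to never disable verification "just for now"; that is exactly how exceptions creep in.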

Implement Access Control (Zero Trust)

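Not everyone needs access to everything. Give each person and service only the access it needs, and verify every request, even from inside your network.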

Simple idea. Rarely implemented well.
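A deny-by-default role check is the core of role-based access control. A minimal sketch; the roles and permission strings are hypothetical, and a real system would also authenticate the caller and load policies from a central store.

```python
# Hypothetical roles and permissions for an AI platform.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "support_agent": {"read:tickets"},
    "ml_engineer": {"read:training_data", "write:models"},
    "privacy_officer": {"read:audit_logs", "read:consent_records"},
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions get nothing."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def fetch_training_data(role: str) -> str:
    # Authorize at the point of access on every call, not once at login.
    if not is_allowed(role, "read:training_data"):
        raise PermissionError(f"role {role!r} may not read training data")
    return "training batch"  # placeholder for the real data access


print(is_allowed("ml_engineer", "read:training_data"))    # True
print(is_allowed("support_agent", "read:training_data"))  # False
print(is_allowed("intern", "read:tickets"))               # False (unknown role)
```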

Regular Audits & Monitoring

You can’t protect what you don’t track.

Continuous monitoring > occasional checks.
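Tracking starts with knowing who touched which data. Below is a minimal sketch of a tamper-evident audit log using only the standard library: each entry stores the hash of the previous one, so quietly editing history becomes detectable. The class, field names, and actors are hypothetical.

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only log: each entry stores the previous entry's hash, so editing
    any entry, or deleting one that isn't the last, breaks the chain."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, actor: str, action: str, resource: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"ts": time.time(), "actor": actor, "action": action,
                 "resource": resource, "prev_hash": prev_hash}
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)

    def verify(self) -> bool:
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("ml_engineer:alice", "read", "training_data/batch-7")
log.record("support:bob", "export", "customer/123")
print(log.verify())  # True
log.entries[0]["actor"] = "someone_else"  # quietly rewrite history
print(log.verify())  # False
```

Anyone with write access could still recompute the whole chain or drop the newest entries, which is why these events are usually shipped to a separate, write-once store that the audited systems cannot modify.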

Adopt Compliance Frameworks

Align with global and local regulations early.

It saves you from expensive rewrites later.

Advanced Privacy-Preserving AI Techniques

Now we get a bit technical—but stay with me.

This is where serious companies separate themselves.

Federated Learning

Data stays on user devices. Each device trains the model locally and shares only model updates, so raw data is never centralized.

Less exposure. More control.
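The core loop of federated averaging (FedAvg), one widely used federated learning algorithm, fits in a few lines. This is a minimal sketch with a toy linear model, two hypothetical clients, and plain Python; real deployments use dedicated frameworks and often add secure aggregation or differential privacy, since model updates themselves can leak some information.

```python
# A toy model y ≈ w*x + b, stored as a (w, b) tuple.
Weights = tuple[float, float]
Dataset = list[tuple[float, float]]  # (x, y) pairs that stay on the client


def local_update(weights: Weights, data: Dataset,
                 lr: float = 0.05, epochs: int = 20) -> Weights:
    """Client step: a few rounds of gradient descent on local data only."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


def federated_average(updates: list[tuple[Weights, int]]) -> Weights:
    """Server step: average client weights, weighted by client sample counts."""
    total = sum(n for _, n in updates)
    w = sum(weights[0] * n for weights, n in updates) / total
    b = sum(weights[1] * n for weights, n in updates) / total
    return w, b


# Two hypothetical clients whose private data follows y = 2x + 1.
clients: list[Dataset] = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0), (1.5, 4.0)],
]

global_weights: Weights = (0.0, 0.0)
for _ in range(50):  # communication rounds
    updates = [(local_update(global_weights, data), len(data)) for data in clients]
    global_weights = federated_average(updates)  # only weights reach the server

print(global_weights)  # close to (2.0, 1.0)
```

The server never sees an (x, y) pair; it only receives each client's weights and sample count, which is the whole point of the technique.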

Differential Privacy

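Adds carefully calibrated noise to query results or training updates, so the output reveals almost nothing about any one individual.

Sounds counterintuitive. Works well at scale: across thousands of records, the noise barely moves the aggregate answer.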
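The classic building block is the Laplace mechanism. For a simple count, one person can change the answer by at most 1, so adding Laplace noise with scale 1/ε makes that single released count ε-differentially private; a smaller ε means more noise and stronger privacy. A minimal sketch with a hypothetical dataset; production systems should use a vetted differential privacy library, because naive floating-point noise has known weaknesses and every additional query spends more of the privacy budget.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) sample: the difference of two i.i.d. exponentials."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(flags: list[bool], epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query.

    Adding or removing one person changes the count by at most 1
    (sensitivity 1), so Laplace noise with scale 1/epsilon gives
    epsilon-differential privacy for this single query.
    """
    return sum(flags) + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(7)  # fixed seed only to make the demo repeatable
has_condition = [True] * 130 + [False] * 870  # hypothetical 1,000 patients
print(private_count(has_condition, epsilon=0.5, rng=rng))  # a noisy value near 130
```

The typical size of the noise is about 1/ε no matter how large the dataset is, which is why it matters less as the counts grow.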

Data Anonymization

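Remove or mask personally identifiable information.

Still useful. Much safer.

One caveat: stripping names and emails isn’t always enough. Combinations like postal code, birth date, and gender can still re-identify people.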
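In practice this often combines three moves: drop direct identifiers, replace IDs with keyed pseudonyms, and coarsen quasi-identifiers such as age and postal code. A minimal sketch; the key, field names, and generalization rules are hypothetical. Note that this is pseudonymization rather than full anonymization, and under GDPR pseudonymized data still counts as personal data.

```python
import hashlib
import hmac

# Hypothetical key; keep it separate from the data (e.g. in a key management service).
PSEUDONYM_KEY = b"replace-with-a-secret-key"
DIRECT_IDENTIFIERS = {"name", "email", "phone"}  # dropped entirely


def pseudonymize_id(user_id: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Keyed hash: the same user always maps to the same token, so records can
    still be joined, but without the key nobody can rebuild the mapping by
    hashing guessed IDs."""
    return hmac.new(key, user_id.encode(), hashlib.sha256).hexdigest()[:16]


def deidentify(record: dict) -> dict:
    out = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    out["user_id"] = pseudonymize_id(record["user_id"])
    # Generalize quasi-identifiers that can re-identify people in combination.
    out["age"] = f"{record['age'] // 10 * 10}s"  # 37 -> "30s"
    code = record["postal_code"]
    out["postal_code"] = code[:3] + "*" * (len(code) - 3)  # "400051" -> "400***"
    return out


customer = {"user_id": "u-123", "name": "Jane Doe", "email": "jane@example.com",
            "age": 37, "postal_code": "400051", "purchase_total": 129.5}
print(deidentify(customer))
# user_id becomes a 16-character token; name and email are gone
```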

Edge AI Processing

Process data locally instead of sending everything to servers.

Faster. More private.
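The pattern is to run the computation where the data is created and send only the result. A minimal sketch with hypothetical names, using heart-rate readings as the example; the simple threshold rule stands in for an on-device model.

```python
import json
from dataclasses import asdict, dataclass
from statistics import mean


@dataclass
class DeviceSummary:
    """The only data that leaves the device: a few aggregate fields."""
    device_id: str
    readings: int
    avg_heart_rate: float
    alert: bool


def summarize_on_device(device_id: str, heart_rates: list[int],
                        alert_threshold: float = 120.0) -> DeviceSummary:
    """Runs on the device; the raw readings never leave this function.
    The threshold rule is a stand-in for an on-device model."""
    avg = mean(heart_rates)
    return DeviceSummary(device_id, len(heart_rates), round(avg, 1),
                         avg > alert_threshold)


summary = summarize_on_device("device-01", [72, 75, 71, 80, 78])
print(json.dumps(asdict(summary)))
# {"device_id": "device-01", "readings": 5, "avg_heart_rate": 75.2, "alert": false}
```

The server receives one small summary instead of a continuous stream of raw readings, which cuts both network traffic and the amount of sensitive data you have to protect.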

AI Compliance & Regulations You Must Know

Let’s address the elephant in the room.

Compliance.

GDPR (Europe)

Strict. And it applies to you if you serve or track people in the EU, no matter where your company is based.

India DPDP Act

India’s Digital Personal Data Protection Act, 2023 tightens the rules on consent and personal data handling.

Ignoring this? Risky.

HIPAA (Healthcare)

If you handle patient health data for US healthcare providers, insurers, or their vendors, this isn’t optional.

Here’s the part most companies misunderstand:

Compliance isn’t a burden. It’s positioning.

Customers trust companies that take privacy seriously.

That trust? It converts.

Real-World Example: Scaling AI Securely

Let me tell you about a SaaS client.

Before:

Rapid AI deployment

No clear data governance

Basic security measures

Result:

Growth plateaued

Enterprise clients hesitated

After:

We rebuilt their system with:

Encrypted pipelines

Role-based access control

Compliance-ready architecture

Result:

Enterprise deals closed faster

Customer trust increased

Revenue followed

Same AI.

Different foundation.

How AI Development Companies Ensure Data Privacy

This is where choosing the right partner matters.

Not all AI development services are equal.

Here’s what experienced teams actually do:

Secure Architecture Design

Privacy is baked into system design, not added later.

Compliance-Ready Solutions

Built to align with regulations from day one.

Ongoing Monitoring & Upgrades

Because threats evolve. Constantly.

At KriraAI, this is standard practice.

(Not because it sounds good, but because we’ve seen what happens when it’s ignored.)

If you’re evaluating an AI development company, this should be your baseline, not a bonus.

Future of AI: Privacy-First Innovation

Let’s zoom out for a second.

Where is this heading?

AI Governance is Rising

Governments are paying attention.

So should you.

Ethical AI is Becoming Standard

Not optional. Expected.

Privacy as Competitive Advantage

Companies that protect data will win.

Simple.

Conclusion

Scaling AI is exciting.

But it comes with responsibility.

The companies that win won’t be the ones with the fastest models.

They’ll be the ones people trust.

Because at scale, AI data privacy isn’t just a technical problem. It’s a reputational one.

And reputations?

They’re hard to rebuild.

FAQs

How can businesses scale AI without compromising data privacy?

Focus on privacy-first architecture, encryption, access control, and compliance frameworks from the beginning, not after scaling.

Why is data privacy important in AI?

Because AI systems rely on sensitive data. Poor privacy can lead to breaches, legal issues, and loss of customer trust.

What are the biggest risks of scaling AI?

Data breaches, regulatory penalties, and trust loss are the biggest risks when scaling AI systems without proper safeguards.

How does compliance help when scaling AI?

Compliance ensures your AI systems follow legal standards, reducing risks and building credibility with customers.

Can small businesses protect data privacy in AI?

Yes. Even small businesses can adopt basic practices like data minimization, encryption, and secure APIs to protect user data.

Founder & CEO

Divyang Mandani is the CEO of KriraAI, driving innovative AI and IT solutions with a focus on transformative technology, ethical AI, and impactful digital strategies for businesses worldwide.