Microsoft Agent Governance Toolkit: Runtime Security for Autonomous AI Agents

On April 2, 2026, Microsoft open-sourced the Agent Governance Toolkit under the MIT license, releasing seven independently installable packages that provide deterministic runtime security for autonomous AI agents. The toolkit is the first to address all ten risk categories identified in the OWASP Top 10 for Agentic Applications, published in December 2025 after review by more than 100 security researchers. It achieves policy enforcement at a reported p99 latency below 0.1 milliseconds, meaning governance decisions happen faster than a single LLM token generation step. For organisations deploying AI agents that call APIs, execute code, manage infrastructure, and interact with production systems, this release represents a structural shift from aspirational safety to enforceable security.

The timing is not accidental. Gartner predicted that 40 percent of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5 percent in 2025. Yet a Cloud Security Alliance survey of 228 IT and security professionals published in March 2026 found that 68 percent of organisations cannot clearly distinguish between human and AI agent activity, and only 18 percent are confident their identity and access management systems can handle agent identities. The governance infrastructure for autonomous agents has simply not kept pace with the ease of building them.

Before this release, the primary defence for most agent deployments was prompt-level safety: system prompts instructing models to follow rules. According to Microsoft's documentation, prompt-based safety approaches show a 26.67 percent policy violation rate in red-team testing. The Agent Governance Toolkit takes a fundamentally different approach: it governs agent actions deterministically at the execution layer rather than filtering LLM inputs or outputs. This post examines the toolkit's architecture, maps it against the OWASP agentic AI risks, explains framework integration, and provides practical adoption guidance. At KriraAI, we have been tracking this space closely because governance infrastructure directly determines whether enterprise agentic AI deployments can move from pilot to production safely.

What the AI Agent Governance Toolkit Actually Does

The most important thing to understand about the Agent Governance Toolkit is what it is not. It is not a prompt guardrail. It is not a content moderation system. It is not an alignment technique applied during model training. It operates at a completely different layer of the stack — the execution layer where agent actions meet real-world systems.

The toolkit sits as middleware between an agent framework and every action an agent attempts to take. Every tool call, every resource access, every inter-agent message passes through a policy engine before execution. The decision flow is deterministic: an action is evaluated against policy, and it is either allowed or denied. There is no probabilistic assessment, no confidence threshold, and no retry-and-hope logic.
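To make the interception idea concrete, here is a minimal sketch of a gate that sits between an agent and its tools. It is not the toolkit's API: the names `Action`, `PolicyDenied`, `governed`, and `read_only_tmp` are hypothetical and exist only to illustrate a binary allow-or-deny check that runs before the tool does.

```python
from dataclasses import dataclass
from functools import wraps
from typing import Any, Callable


@dataclass(frozen=True)
class Action:
    """Structured description of an attempted tool call (hypothetical shape)."""
    agent_id: str
    tool: str
    args: dict


class PolicyDenied(Exception):
    """Raised when the policy check denies an action."""


def governed(policy: Callable[[Action], bool], agent_id: str):
    """Wrap a tool so every call is checked against `policy` before it runs."""
    def decorator(tool_fn: Callable[..., Any]) -> Callable[..., Any]:
        @wraps(tool_fn)
        def wrapper(**kwargs: Any) -> Any:
            action = Action(agent_id=agent_id, tool=tool_fn.__name__, args=kwargs)
            if not policy(action):  # binary decision: allow or deny
                raise PolicyDenied(f"{agent_id} may not call {action.tool}")
            return tool_fn(**kwargs)  # only reached when the policy allows it
        return wrapper
    return decorator


def read_only_tmp(action: Action) -> bool:
    """Hypothetical policy: only file reads under /tmp/ are allowed."""
    path = str(action.args.get("path", ""))
    return action.tool == "read_file" and path.startswith("/tmp/")


@governed(read_only_tmp, agent_id="agent-1")
def read_file(path: str) -> str:
    # Stand-in for a real tool; returns a placeholder instead of touching disk.
    return f"contents of {path}"
```

Calling `read_file(path="/tmp/a.txt")` runs the tool, while `read_file(path="/etc/passwd")` raises `PolicyDenied` before the tool body executes. The decision depends only on the structured action, not on any model output.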

Microsoft's design rationale draws explicitly from three proven infrastructure patterns. Operating systems solved the problem of untrusted programs sharing resources through kernels, privilege rings, and process isolation. Service meshes solved it for microservices through mTLS and identity verification. Site reliability engineering solved it for distributed systems through SLOs and circuit breakers. The Agent Governance Toolkit applies these battle-tested paradigms to AI agents, treating them the way operating systems treat processes — as entities that must be identified, constrained, monitored, and terminated when they violate policy.

This distinction matters because LLM-level guardrails operate on text and are inherently susceptible to adversarial prompting and the non-determinism of language model outputs. Action-level governance operates on structured function calls and system interactions, where policies can be evaluated with the same deterministic logic used in firewalls and access control lists throughout enterprise infrastructure.

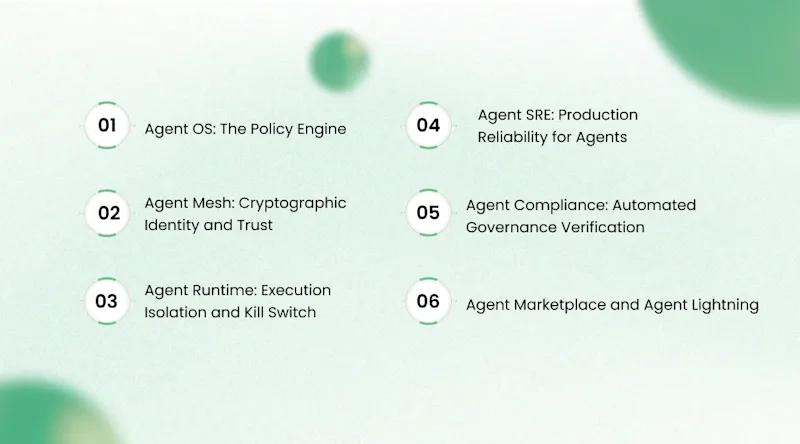

The Seven-Package Architecture Explained

The toolkit is structured as a monorepo with seven independently installable Python packages. Each package targets a distinct governance layer, and teams can adopt them incrementally based on their deployment maturity and risk profile. This modularity is a deliberate design choice — it means an organisation can start with just the policy engine and add identity, compliance, and reliability packages as their agent systems grow in complexity.

Agent OS: The Policy Engine

Agent OS is the core package and functions as a stateless policy engine. Every agent action passes through Agent OS before execution. It supports three policy languages: YAML rules for simple configurations, OPA Rego for organisations already using Open Policy Agent, and Cedar for teams working within the AWS Verified Permissions ecosystem. Microsoft reports a p99 evaluation latency below 0.1 milliseconds, which is small enough that governance adds no perceptible delay to agent operations. In Microsoft's red-team testing, the toolkit recorded a 0.00 percent policy bypass rate, compared with the 26.67 percent policy violation rate of prompt-based safety approaches.

Agent Mesh: Cryptographic Identity and Trust

Agent Mesh provides the identity layer for multi-agent systems. Each agent receives a Decentralised Identifier (DID) backed by Ed25519 cryptographic keys. Every message exchanged between agents is cryptographically signed, providing non-repudiation and tamper detection. The package implements the Inter-Agent Trust Protocol (IATP) for secure agent-to-agent communication and maintains dynamic trust scores on a 0-to-1000 scale with five behavioural tiers. Trust scores adjust based on agent behaviour over time, meaning an agent that consistently operates within policy earns higher trust, while policy violations reduce trust and can trigger automated restrictions.
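The trust-scoring behaviour described above can be sketched as follows. The article states only that scores run from 0 to 1000 across five tiers, so the tier names, tier boundaries, starting score, and adjustment sizes below are all assumptions chosen for illustration, not values from the toolkit.

```python
from dataclasses import dataclass

# Hypothetical tier floors and names, highest first.
TIERS = [
    (800, "high"),
    (600, "elevated"),
    (400, "standard"),
    (200, "limited"),
    (0, "untrusted"),
]


def clamp(score: int) -> int:
    """Keep a score inside the 0-to-1000 range."""
    return max(0, min(1000, score))


@dataclass
class TrustRecord:
    score: int = 500  # assumed starting point for a new agent

    def record(self, violated: bool) -> None:
        """Lower trust sharply on a violation, raise it slowly on compliance."""
        delta = -100 if violated else 5  # assumed adjustment sizes
        self.score = clamp(self.score + delta)

    @property
    def tier(self) -> str:
        """Return the name of the highest tier whose floor the score meets."""
        for floor, name in TIERS:
            if self.score >= floor:
                return name
        return TIERS[-1][1]
```

The asymmetric adjustment (a large penalty, a small reward) is one plausible design: trust is slow to earn and quick to lose, which lets a tier drop trigger automated restrictions soon after a violation.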

Agent Runtime: Execution Isolation and Kill Switch

Agent Runtime provides the execution containment layer. It implements four dynamic privilege rings inspired by CPU privilege levels, controlling what categories of actions an agent can perform based on its current trust tier and operational context. The package includes saga orchestration for multi-step transactions, ensuring that if an agent's multi-step workflow fails partway through, compensating actions can roll back partial changes. The most operationally significant feature is the kill switch — emergency agent termination that can be triggered manually or automatically based on policy violations. In production environments where agents manage infrastructure or execute financial transactions, this capability moves from nice-to-have to essential.
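The saga pattern mentioned above is a general technique, and a minimal version fits in a few lines. The sketch below is not Agent Runtime's implementation; `run_saga` and the step names are hypothetical. It runs steps in order and, when one fails, calls the compensating action of every completed step in reverse order.

```python
from typing import Callable, List, Tuple

# Each step is (name, action, compensation).
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_saga(steps: List[Step]) -> List[str]:
    """Run steps in order; on failure, undo completed steps in reverse order."""
    log: List[str] = []
    completed: List[Tuple[str, Callable[[], None]]] = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception:
            log.append(f"failed:{name}")
            for prev_name, prev_compensate in reversed(completed):
                prev_compensate()
                log.append(f"undone:{prev_name}")
            break
        log.append(f"done:{name}")
        completed.append((name, compensate))
    return log


# Hypothetical two-step workflow whose second step fails.
state = {"stock_reserved": False}


def reserve_stock() -> None:
    state["stock_reserved"] = True


def release_stock() -> None:
    state["stock_reserved"] = False


def charge_card() -> None:
    raise RuntimeError("payment API unavailable")


saga_log = run_saga([
    ("reserve_stock", reserve_stock, release_stock),
    ("charge_card", charge_card, lambda: None),
])
```

After this run, `state["stock_reserved"]` is back to `False`, because the reservation was compensated when the charge failed. A production saga would also need to handle failures inside compensations, which this sketch ignores.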

Agent SRE: Production Reliability for Agents

Agent SRE applies site reliability engineering practices to agent systems — SLOs, error budgets, circuit breakers, chaos engineering, and progressive delivery. This package enables engineering teams to define operational boundaries for agent systems and automatically degrade or halt operations when those boundaries are breached. These are the same reliability patterns that have proven essential for operating microservices at scale, now applied to autonomous agents.
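As an illustration of one of these patterns, the following is a deliberately simple circuit breaker. It is a generic sketch rather than Agent SRE's interface: `CircuitBreaker` and `CircuitOpen` are hypothetical names, and the half-open recovery state that production breakers usually include is left out.

```python
from typing import Any, Callable


class CircuitOpen(Exception):
    """Raised when calls are halted because the breaker has tripped."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self.failures = 0  # consecutive failures seen so far
        self.open = False  # True once the breaker has tripped

    def call(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        """Run `fn` unless the breaker is open; trip after repeated failures."""
        if self.open:
            raise CircuitOpen("circuit open: agent calls are halted")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.open = True
            raise  # the original error still reaches the caller
        self.failures = 0  # a success resets the consecutive-failure count
        return result
```

Once the breaker is open, further calls fail fast without invoking the downstream function, so a misbehaving dependency cannot drag every agent that relies on it into repeated failures.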

Agent Compliance: Automated Governance Verification

Agent Compliance automates compliance checking against regulatory frameworks including the EU AI Act, HIPAA, and SOC 2, with automated evidence collection covering all ten OWASP risk categories. The EU AI Act's high-risk obligations take effect in August 2026, and the Colorado AI Act becomes enforceable in June 2026, making automated compliance verification increasingly urgent.

Agent Marketplace and Agent Lightning

Agent Marketplace manages the lifecycle of agent plugins with Ed25519 signing and verification. Unsigned plugins are blocked at installation, directly addressing supply chain compromise risks. Agent Lightning governs reinforcement learning workflows, ensuring that policy constraints are enforced during RL training and that reward shaping does not produce policy-violating behaviour.

How Deterministic Policy Enforcement Works at Sub-Millisecond Latency

The performance characteristics of the policy engine determine whether governance is practically viable in real-time agent systems. An agent processing tool calls in sequence, each taking hundreds of milliseconds for API round trips, cannot afford a governance layer that adds seconds of latency. Agent OS achieves sub-millisecond enforcement through several architectural choices.

The engine is stateless by design. Each policy evaluation receives the complete context it needs — agent identity, requested action, target resource, and current trust score — as input. There is no database lookup, no network call to an external policy service, and no model inference in the critical path. Policy rules are compiled into an in-memory evaluation structure at startup, and each evaluation is a deterministic traversal of that structure.
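A rough sketch of what stateless, precompiled evaluation can look like is shown below. The rule format, the `Context` fields, and the first-match, default-deny semantics are assumptions made for this example; the toolkit's real policy languages are YAML, OPA Rego, and Cedar, and this code does not reproduce any of them.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Context:
    """Everything one evaluation needs, passed in as input."""
    agent_id: str
    action: str
    resource: str
    trust_score: int


# Hypothetical rule format: (action, resource_prefix, min_trust, effect).
RAW_RULES = [
    ("http.get", "https://api.internal/", 300, "allow"),
    ("db.write", "orders", 700, "allow"),
    ("db.write", "audit_log", 0, "deny"),
]

CompiledRules = Dict[str, List[Tuple[str, int, str]]]


def compile_rules(raw: List[Tuple[str, str, int, str]]) -> CompiledRules:
    """Index rules by action once at startup."""
    index: CompiledRules = {}
    for action, prefix, min_trust, effect in raw:
        index.setdefault(action, []).append((prefix, min_trust, effect))
    return index


def evaluate(compiled: CompiledRules, ctx: Context) -> str:
    """Pure function: the first matching rule wins, otherwise deny."""
    for prefix, min_trust, effect in compiled.get(ctx.action, ()):
        if ctx.resource.startswith(prefix) and ctx.trust_score >= min_trust:
            return effect
    return "deny"


COMPILED = compile_rules(RAW_RULES)
```

Because `evaluate` reads only its arguments and performs no I/O, the same context always yields the same decision, and the function can run in a sidecar, in middleware, or in-process without shared state.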

This design has important deployment implications. Because the engine is stateless, it can be deployed as a sidecar alongside each agent container in Kubernetes, as middleware within an agent framework's request pipeline, or as a library linked directly into the agent application. Microsoft recommends running each agent in a separate container for OS-level isolation, with Agent OS providing application-level governance within each container. This layered approach mirrors the defence-in-depth patterns standard in enterprise security.

KriraAI's engineering teams recognise this pattern from our own work building production AI systems. The principle of making governance invisible to the developer experience while remaining absolute in enforcement separates tools that get adopted from tools that get bypassed.

Mapping the OWASP Agentic AI Risks to Toolkit Capabilities

The OWASP Top 10 for Agentic Applications provides the industry's first formal taxonomy of risks specific to autonomous AI agents. Understanding how the Agent Governance Toolkit maps to each risk category reveals both its strengths and the boundaries of what runtime governance can address.

The ten OWASP agentic AI risks and their toolkit coverage are as follows:

ASI01 — Agent Goal Hijacking: Agent OS policy rules detect and block actions that deviate from an agent's authorised objective set, preventing hijacked goals from translating into unauthorised actions.

ASI02 — Tool Misuse: Agent OS enforces tool-level permissions with parameter-level constraints on how tools are called.

ASI03 — Identity and Privilege Abuse: Agent Mesh provides cryptographic identities via DIDs and Ed25519, preventing spoofing and enabling precise privilege scoping.

ASI04 — Inadequate Guardrails on Tool Actions: Agent Runtime's privilege rings constrain action categories, while Agent OS provides parameter-level enforcement.

ASI05 — Insecure Output Handling: Agent OS intercepts and validates agent outputs before they reach downstream systems.

ASI06 — Memory Poisoning: Agent Runtime's execution isolation prevents cross-agent memory contamination.

ASI07 — Inadequate Multi-Agent Orchestration: Agent Mesh's trust scoring and IATP protocol ensure authenticated, authorised, and auditable multi-agent interactions.

ASI08 — Cascading Failures: Agent SRE's circuit breakers and error budgets prevent failure propagation across multi-agent systems.

ASI09 — Supply Chain Compromise: Agent Marketplace enforces Ed25519 signing on all plugins, blocking unsigned or tampered components.

ASI10 — Rogue Agents: Agent Runtime's kill switch provides emergency termination, while Agent Mesh's trust scoring detects behavioural drift.

This coverage is comprehensive but comes with an important caveat: the toolkit provides application-level governance, not OS kernel-level isolation. For production deployments, Microsoft recommends combining application-level governance with container-level isolation.

Framework Integration: Working With the Tools You Already Use

A multi-agent governance framework is only valuable if it works with the agent frameworks teams have already invested in. The toolkit was designed to be framework-agnostic from its initial release, integrating through each framework's native extension points.

The integration approach is architecturally clean. For LangChain, the toolkit hooks into callback handlers. For CrewAI, it uses task decorators. For Google ADK, it plugs into the plugin system. For Microsoft Agent Framework, it uses the middleware pipeline. Integrations for OpenAI Agents SDK, Haystack, LangGraph, and PydanticAI are all shipped. LlamaIndex has a TrustedAgentWorker integration, and Dify has the governance plugin available in its marketplace.

The cross-language support extends the toolkit's reach beyond Python. A TypeScript SDK is available through npm as @microsoft/agentmesh-sdk, and a .NET SDK is available on NuGet as Microsoft.AgentGovernance. SDKs in Rust and Go implement core governance capabilities for teams operating in those ecosystems. For enterprises running heterogeneous technology stacks, this means governance can be applied consistently across agents written in different languages.

At KriraAI, we see this framework-agnostic approach as essential for enterprise adoption. Our clients typically operate agent systems built on multiple frameworks, and any governance solution that requires standardising on a single framework creates adoption barriers. The toolkit's approach of meeting teams where they are — integrating through native extension points with minimal code changes — significantly reduces the gap between deciding to adopt governance and having it enforced in production.

Practical Adoption: From Installation to Production Deployment

For engineering teams evaluating the toolkit, the adoption path is incremental. Installation is a single pip command, and diagnostic tools provide immediate feedback.

For teams deploying on Azure, the toolkit supports sidecar deployment on Azure Kubernetes Service, middleware integration with Azure Foundry Agent Service, and containerised deployment alongside existing agent infrastructure. Twenty step-by-step tutorials ship with the toolkit, providing a practical on-ramp beyond documentation.

The recommended adoption sequence starts with Agent OS for policy enforcement on existing agent tool calls. This single package provides immediate value by creating an auditable record of every agent action and enabling basic policy constraints. As multi-agent scenarios emerge, Agent Mesh adds cryptographic identity and trust scoring. Agent SRE layers in production reliability practices as systems scale. Agent Compliance becomes relevant as regulatory deadlines approach.

This incremental approach aligns governance adoption with organisational maturity. A team running a single agent prototype does not need a full seven-package governance stack. A team operating dozens of agents across production infrastructure needs every layer. At KriraAI, we advise our enterprise clients to adopt governance infrastructure incrementally, starting with the policy engine and expanding as their agentic AI systems mature.

Current Limitations and What This Does Not Solve

An honest assessment of any security tool requires naming what it does not do. Microsoft's documentation is transparent about several important limitations.

The most significant limitation is that the toolkit provides application-level governance within the same trust boundary as the agent. The policy engine and the agent run in the same process, meaning a sufficiently sophisticated attack that compromises the agent process could potentially bypass governance. Microsoft's recommended mitigation is to run each agent in a separate container for OS-level isolation, using the toolkit for application-level governance within that container.

The toolkit does not address model-level safety. It does not prevent an LLM from generating harmful text, hallucinating facts, or producing biased outputs. Microsoft points to Azure AI Content Safety as a complementary tool. Teams need both layers — content safety for model outputs and action governance for agent behaviour — for a complete AI agent runtime security posture.

Performance in distributed deployments also deserves attention. The reported p99 latency below 0.1 milliseconds applies to local, in-memory policy evaluation; deployments that add network calls to external policy stores will see additional latency. Teams operating agents across multiple regions should benchmark under their specific topology. Finally, the toolkit was released on April 2, 2026, and while it ships with more than 9,500 tests, it has limited production deployment history. Teams adopting today are early adopters at the frontier of agentic AI security.
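For teams that want to check latency in their own environment, a small harness like the one below is one way to start. It is a sketch using only the Python standard library; `sample_policy_check` is a hypothetical stand-in that should be replaced with a call into whatever policy evaluation path is actually deployed, including any network hop.

```python
import statistics
import time
from typing import Callable


def p99_latency_ms(fn: Callable[[], object], iterations: int = 10_000) -> float:
    """Time `fn` repeatedly and return the 99th-percentile latency in ms."""
    samples = []
    for _ in range(iterations):
        start = time.perf_counter_ns()
        fn()
        samples.append((time.perf_counter_ns() - start) / 1_000_000)
    # quantiles(n=100) returns 99 cut points; the last is the 99th percentile.
    return statistics.quantiles(samples, n=100)[-1]


def sample_policy_check() -> bool:
    """Hypothetical stand-in for a real policy evaluation call."""
    return "db.write" in {"http.get", "db.read"}


if __name__ == "__main__":
    print(f"p99: {p99_latency_ms(sample_policy_check):.4f} ms")
```

Numbers from a harness like this reflect the machine, interpreter, and load it runs under, so they are only comparable with vendor figures when the measurement conditions match.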

Where Agentic AI Governance Goes From Here

Three takeaways matter most from this release. First, the architecture works by applying proven infrastructure patterns — kernels, privilege rings, service meshes, and SRE practices — to autonomous AI agents, achieving deterministic policy enforcement at sub-millisecond latency. Second, the toolkit matters most for enterprises moving agentic AI from pilot to production, where the governance gap has been the primary barrier to scaling. Third, engineering teams should adopt governance incrementally, starting with the policy engine and expanding as their agent systems grow.

The broader trajectory is clear. As the EU AI Act and Colorado AI Act come into force in 2026, and as the OWASP Agentic Top 10 becomes the baseline for security assessments, agentic AI security shifts from best practice to compliance requirement. Organisations that embed governance early will move faster than those treating it as a retroactive audit.

At KriraAI, we build and deploy production AI systems at the intersection of emerging research and enterprise reality. We apply new technology when it has demonstrated the engineering maturity required for production use. The Agent Governance Toolkit represents exactly that threshold: infrastructure that is architecturally sound, practically deployable, and aligned with enterprise security requirements. If your organisation is building or scaling agentic AI systems, we invite you to explore what this technology could mean for your deployment with KriraAI.

FAQs

The fundamental difference is the layer at which governance operates. Prompt guardrails work at the LLM input/output layer, attempting to constrain what a model says through safety instructions or output filtering. Content moderation classifies model outputs for harmful content. The Agent Governance Toolkit operates at the action execution layer, intercepting every tool call, API request, and inter-agent message before it executes. Prompt-based approaches are inherently probabilistic because they depend on the model following instructions, while action-level governance is deterministic. A policy rule that blocks an agent from accessing a particular API endpoint will block that access regardless of what the model was prompted to do. Microsoft's testing showed a 26.67 percent policy violation rate with prompt-based safety versus a 0.00 percent bypass rate with deterministic policy enforcement, which is why deterministic enforcement is the better fit for the auditability that regulators and enterprise security teams expect.

The OWASP Top 10 for Agentic Applications was published in December 2025 after peer review by more than 100 security researchers. The ten risks are: ASI01 Agent Goal Hijacking, where adversarial inputs redirect an agent's objectives; ASI02 Tool Misuse, where agents use authorised tools in unauthorised ways; ASI03 Identity and Privilege Abuse, where agents operate with excessive permissions; ASI04 Inadequate Guardrails on Tool Actions; ASI05 Insecure Output Handling; ASI06 Memory Poisoning, where persistent agent memory is contaminated; ASI07 Inadequate Multi-Agent Orchestration; ASI08 Cascading Failures, where a failure in one agent propagates through a system; ASI09 Supply Chain Compromise; and ASI10 Rogue Agents, where agents drift from intended behaviour. A Dark Reading poll found that 48 percent of cybersecurity professionals identify agentic AI as the number-one attack vector heading into 2026, yet only 34 percent of enterprises have AI-specific security controls in place.

Securing multi-agent systems requires addressing three distinct layers: agent identity, inter-agent communication, and action governance. At the identity layer, each agent needs a cryptographic identity — the toolkit uses Decentralised Identifiers with Ed25519 keys — so every action can be attributed to a specific agent. At the communication layer, every inter-agent message should be signed and verified, with trust scores that adjust dynamically based on observed behaviour. At the action layer, every tool call should pass through a deterministic policy engine. Beyond these three layers, production multi-agent systems need circuit breakers to prevent cascading failures, audit logging for post-incident investigation, and a kill switch for emergency termination. The multi-agent governance framework provided by the toolkit addresses all three layers, though teams should complement it with container-level isolation for defence in depth.

Deterministic policy enforcement means that the governance decision for any given agent action is a binary allow or deny computed from fixed rules evaluated against structured action metadata, with no randomness, no model inference, and no confidence thresholds. This contrasts with probabilistic approaches where a classifier evaluates whether an action seems safe, producing a confidence score that might vary between evaluations. Determinism matters for three reasons in enterprise contexts. First, it is auditable — regulators can review the exact rule that allowed or denied any action. Second, it is predictable — the same action under the same context always produces the same decision, enabling reliable testing. Third, it is resistant to adversarial manipulation — because no language model is in the policy evaluation path, prompt injection cannot influence governance decisions. The toolkit achieves this by compiling policy rules into an in-memory evaluation structure at startup with zero network calls or model inference in the critical path.

The toolkit integrates through each framework's native extension mechanisms rather than requiring migration. For LangChain, it uses callback handlers that intercept tool calls. For CrewAI, it uses task decorators. For Google ADK, it plugs into the plugin system. For Microsoft Agent Framework, it uses the middleware pipeline. Integrations for OpenAI Agents SDK, Haystack, LangGraph, PydanticAI, LlamaIndex, and Dify are all available. Adding governance to an existing agent typically requires installing the pip package, writing a YAML policy file, and adding a few lines of configuration — not rewriting agent logic. SDKs are available in Python, TypeScript, .NET, Rust, and Go, enabling consistent governance across agents in different languages. This framework-agnostic design is essential for enterprise environments where multiple teams use different frameworks.

Founder & CEO

Divyang Mandani is the CEO of KriraAI, driving innovative AI and IT solutions with a focus on transformative technology, ethical AI, and impactful digital strategies for businesses worldwide.