Deep Learning Services for Mid-Market Companies: A Practical Growth Guide

The global deep learning market reached approximately $48 billion in 2026 and is projected to surpass $232 billion by 2030, growing at a compound annual rate exceeding 37 percent. Yet the vast majority of that growth has been captured by either large enterprises with dedicated AI departments or nimble startups born on cloud infrastructure. Mid-market companies, those with 50 to 500 employees, account for roughly a third of private sector GDP across most economies, but fewer than 18 percent of them have moved beyond basic automation into actual deep learning deployments. This gap is not a reflection of need. It is a reflection of how poorly the deep learning services market has been packaged, priced, and communicated for companies operating at this specific scale.

If you run or lead a company with between 50 and 500 employees, you have likely seen deep learning success stories that feel irrelevant. A Fortune 500 retailer building a custom computer vision system with a team of forty ML engineers does not map to your reality. Neither does a solo freelancer plugging a pre-trained API into a weekend project. Your company sits in the most strategically important and most underserved zone in the AI adoption landscape. This guide is written specifically for you. It covers what deep learning services actually cost at your scale, which applications deliver the fastest returns for your operational profile, how to implement them without hiring a full data science team, and what the competitive landscape will look like in three to five years for companies of your size that move now versus those that wait.

The Operational Reality of a 50 to 500 Employee Company

Understanding why deep learning adoption looks different at this scale requires an honest picture of what daily operations actually feel like inside a mid-market company. You are past the stage where the founder handles everything, but you are not yet at the stage where you can build entire internal departments around a single technology initiative. Your teams are lean and cross-functional, meaning the person who manages your data warehouse might also oversee your analytics reporting, your CRM configuration, and your quarterly board dashboards.

Most mid-market companies in 2026 operate on annual technology budgets between $500,000 and $3 million, depending on the industry. That sounds substantial until you account for existing SaaS subscriptions, infrastructure maintenance, cybersecurity obligations, and the ongoing cost of keeping legacy systems connected to newer platforms. The portion available for a genuinely new initiative like deep learning is typically between $75,000 and $400,000 for the first year, including both vendor costs and internal resource allocation.

Decision-making at this scale is faster than at an enterprise but carries higher personal stakes. A VP of Operations who champions a deep learning initiative and sees it stall after six months faces real career consequences, not just a line item in a quarterly review. This creates a risk aversion that is entirely rational. Mid-market leaders are not afraid of AI because they do not understand it. They are cautious because the margin for error is thinner at their scale, and every dollar and every hour of team bandwidth committed to one initiative is a dollar and an hour taken from something else.

The technology stack at a typical mid-market company includes a mix of modern cloud services and older on-premise systems that were never designed for integration. You might run Salesforce for CRM, NetSuite or a similar ERP for finance, a mix of custom and off-the-shelf tools for industry-specific workflows, and a data environment that ranges from reasonably organized to genuinely fragmented. This is the starting point for deep learning adoption, and any realistic strategy must account for it.

How Deep Learning Services Differ at Mid-Market Scale

What Enterprises Do That You Should Not Copy

Large enterprises with more than 5,000 employees approach deep learning by building internal AI centers of excellence, hiring teams of 20 to 100 machine learning engineers, investing in custom training infrastructure, and running multi-year transformation programs. The typical enterprise deep learning budget starts at $5 million and can exceed $50 million annually. They can afford to run ten pilot projects knowing that seven will fail, because the three that succeed will generate enough value to justify the portfolio.

Mid-market companies cannot operate this way, and attempting to replicate enterprise AI strategies is one of the most common and most expensive mistakes at this scale. You do not need an internal ML team of twenty. You do not need to train models from scratch on custom GPU clusters. You do not need a Chief AI Officer reporting to the board. What you need is a focused, outcome-driven engagement with deep learning services that solves two or three high-impact problems using a combination of pre-trained models, transfer learning, and managed infrastructure.

What Solo Operators Do That Will Not Scale for You

On the other end, solo operators and micro businesses with fewer than ten employees adopt deep learning almost exclusively through consumer-grade APIs and pre-built tools. They use services like image recognition APIs, text generation endpoints, and plug-and-play chatbot builders. These tools cost between $20 and $500 per month and require virtually no technical integration. They work well at that scale because the data volumes are small, the use cases are simple, and the stakes of an incorrect prediction are low.

For a mid-market company processing thousands of transactions daily, managing complex supply chains, or serving diverse customer segments, these consumer-grade tools break down quickly. They cannot handle your data volume efficiently, they do not integrate with your existing systems, and they offer no customization for your specific domain. Deep learning implementation for a mid-size business sits in a fundamentally different cost category, one that requires real engineering but not enterprise-level engineering.

The Mid-Market Sweet Spot

The practical approach for companies at your scale combines three elements. First, you leverage pre-trained foundation models and fine-tune them on your proprietary data rather than training from scratch. This reduces both compute costs and time to deployment by 60 to 80 percent compared to custom model development. Second, you use managed cloud infrastructure from providers like AWS, Google Cloud, or Azure rather than purchasing hardware. Third, you partner with a focused AI solutions provider like KriraAI that understands how to scope deep learning projects for mid-market budgets and timelines, rather than hiring a team of specialists you cannot fully utilize year-round. This combination gives you 80 percent of the capability of an enterprise deep learning deployment at roughly 15 to 25 percent of the cost.

The Right Deep Learning Applications for Mid-Market Companies

Not every deep learning application is equally practical at mid-market scale. The applications that deliver the strongest returns share three characteristics: they address a problem you are already spending significant money or time on, they work well with the data volumes a mid-market company generates, and they can be deployed within three to six months rather than requiring a multi-year buildout. The following applications consistently rank as the highest impact for companies in this segment.

Demand Forecasting and Predictive Analytics

Deep learning models, particularly recurrent neural networks and transformer-based architectures, excel at forecasting demand across complex product portfolios. For a mid-market manufacturer or distributor handling 500 to 5,000 SKUs, a well-tuned forecasting model typically reduces inventory carrying costs by 15 to 25 percent while simultaneously reducing stockouts by 20 to 35 percent. For a mid-size business, deploying a managed forecasting solution costs $80,000 to $200,000 in the first year, including data preparation, model development, integration, and ongoing cloud compute. The return typically exceeds 3x the investment within the first twelve months through reduced overstock, fewer emergency orders, and better cash flow management.

Intelligent Document Processing

Mid-market companies are often drowning in document-based workflows. Invoices, contracts, compliance forms, customer correspondence, and technical specifications flow through the organization in formats that resist automation. Deep learning-powered document processing, which uses convolutional neural networks for layout understanding and transformer models for text extraction, can automate 70 to 85 percent of the document classification and data extraction tasks that currently require manual handling. A company processing 10,000 documents per month can realistically save 400 to 600 labor hours monthly, which at mid-market labor costs translates to $15,000 to $30,000 in monthly savings.
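As a quick sanity check on the figures above, the short sketch below derives the labor cost per hour and the handling time per document that those ranges imply. It only rearranges the numbers quoted in the paragraph; the function names are illustrative, and pairing the low ends together and the high ends together is an assumption.

```python
# Figures quoted in the paragraph above (low end, high end).
HOURS_SAVED_PER_MONTH = (400, 600)
SAVINGS_PER_MONTH_USD = (15_000, 30_000)
DOCUMENTS_PER_MONTH = 10_000


def implied_hourly_cost(savings_usd: float, hours: float) -> float:
    """Loaded labor cost per hour implied by a monthly savings figure."""
    return savings_usd / hours


def minutes_saved_per_document(hours: float, documents: int) -> float:
    """Average manual handling time removed per document, in minutes."""
    return hours * 60 / documents


# Assumption: low ends are paired together, and high ends are paired together.
low_rate = implied_hourly_cost(SAVINGS_PER_MONTH_USD[0], HOURS_SAVED_PER_MONTH[0])
high_rate = implied_hourly_cost(SAVINGS_PER_MONTH_USD[1], HOURS_SAVED_PER_MONTH[1])
low_minutes = minutes_saved_per_document(HOURS_SAVED_PER_MONTH[0], DOCUMENTS_PER_MONTH)
high_minutes = minutes_saved_per_document(HOURS_SAVED_PER_MONTH[1], DOCUMENTS_PER_MONTH)

print(f"Implied labor cost: ${low_rate:.2f} to ${high_rate:.2f} per hour")
print(f"Time saved per document: {low_minutes:.1f} to {high_minutes:.1f} minutes")
```

If your own loaded labor cost or per-document handling time sits well outside these implied ranges, scale the savings estimate accordingly before building a business case on it.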

Customer Behavior Modeling

For mid-market companies with direct customer relationships, whether in B2B services, e-commerce, financial services, or SaaS, deep learning models can identify patterns in customer behavior that traditional analytics miss entirely. Deployments in this segment typically focus on three outcomes:

Churn prediction models that identify at-risk accounts 30 to 60 days before they leave, giving your team time to intervene with retention offers or relationship outreach.

Lifetime value estimation models that help your sales and marketing teams prioritize acquisition spending toward customer profiles with the highest long-term revenue potential.

Cross-sell and upsell recommendation engines that analyze purchase history, engagement patterns, and firmographic data to suggest the right offer at the right time.

These models require a minimum of 12 to 18 months of historical customer data to train effectively, but most mid-market companies already have this data sitting in their CRM and transaction systems.

Quality Control and Anomaly Detection

For mid-market manufacturers, logistics providers, and companies with physical operations, deep learning-based visual inspection and anomaly detection offer some of the fastest returns available. Computer vision models trained on images of your products or processes can detect defects, deviations, and safety issues with accuracy rates exceeding 95 percent, often catching problems that human inspectors miss during high-volume shifts. Deployment costs for a single production line or inspection point typically range from $40,000 to $120,000, with ongoing compute and maintenance costs of $1,000 to $3,000 per month.
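To compare this with the annual figures quoted for other applications, it can help to roll the one-time and recurring costs into a single first-year number. The sketch below does that with the ranges above. It assumes the monthly charges begin in the first month, which may not match a real contract.

```python
def first_year_cost(deployment_usd: float, monthly_usd: float, months: int = 12) -> float:
    """One-time deployment cost plus recurring compute and maintenance."""
    return deployment_usd + monthly_usd * months


# Low and high ends of the ranges quoted above for one inspection point.
# Assumption: recurring charges start in month one and run for twelve months.
low_total = first_year_cost(deployment_usd=40_000, monthly_usd=1_000)
high_total = first_year_cost(deployment_usd=120_000, monthly_usd=3_000)

print(f"First-year cost per inspection point: ${low_total:,.0f} to ${high_total:,.0f}")
```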

Quantified Business Impact: What the Numbers Actually Look Like at Your Scale

The challenge with most published AI ROI figures is that they come from enterprise deployments where the absolute numbers are impressive but meaningless at mid-market scale. When a company with 10,000 employees reports saving $50 million through AI, that tells you nothing about what a company with 200 employees can expect. Here is what the numbers actually look like for mid-market companies that have implemented deep learning services in the past two years.

Mid-market companies deploying deep learning for operational forecasting report an average reduction of 22 percent in planning-related costs within the first year of full deployment. For a company with $30 million in annual revenue, this translates to roughly $300,000 to $500,000 in annual savings from better inventory management, staffing optimization, and resource allocation. ROI for mid-market firms in this category consistently exceeds 250 percent over a 24-month period when implementation costs are fully loaded.
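The ROI claim is easier to test against your own numbers with the formula written out. The sketch below uses the common definition ROI = (benefit - cost) / cost. The $400,000 annual savings is the midpoint of the range above, while the $220,000 fully loaded cost is a purely hypothetical input, not a figure from this guide.

```python
def roi_percent(total_benefit_usd: float, total_cost_usd: float) -> float:
    """Return on investment as a percentage: (benefit - cost) / cost * 100."""
    return (total_benefit_usd - total_cost_usd) / total_cost_usd * 100


def max_cost_for_target_roi(total_benefit_usd: float, target_roi_percent: float) -> float:
    """Largest fully loaded cost that still reaches the target ROI."""
    return total_benefit_usd / (1 + target_roi_percent / 100)


annual_savings = 400_000            # midpoint of the $300,000 to $500,000 range
benefit_24_months = annual_savings * 2
hypothetical_cost = 220_000         # assumption: fully loaded cost over 24 months

roi = roi_percent(benefit_24_months, hypothetical_cost)
cost_ceiling = max_cost_for_target_roi(benefit_24_months, target_roi_percent=250)

print(f"24-month ROI: {roi:.1f}%")
print(f"Cost ceiling for 250% ROI: ${cost_ceiling:,.0f}")
```

Under these assumptions, the project clears 250 percent only while the fully loaded 24-month cost stays below roughly $229,000, so vendor fees, internal time, and data preparation all need to be counted.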

In customer-facing applications, mid-market companies using deep learning for personalization and recommendation report revenue increases of 8 to 14 percent on the customer segments where the models are active. KriraAI has observed that mid-market clients implementing neural network-based customer scoring see a median improvement of 35 percent in marketing-qualified lead conversion rates within the first two quarters of deployment. These gains compound over time as the models ingest more data and refine their predictions.

Time savings are equally significant at this scale. A 200-person company that automates document processing, customer inquiry routing, and report generation through deep learning typically recovers the equivalent of 12 to 18 full-time employees' worth of labor hours annually. This does not mean eliminating 12 to 18 jobs. It means redirecting that much time from repetitive processing tasks to higher-value work like customer relationship development, strategic analysis, and product innovation. For mid-market companies where every team member already wears multiple hats, this reallocation of time is often more valuable than the direct cost savings.

Deep Learning Services Implementation: A Roadmap Built for Mid-Market Constraints

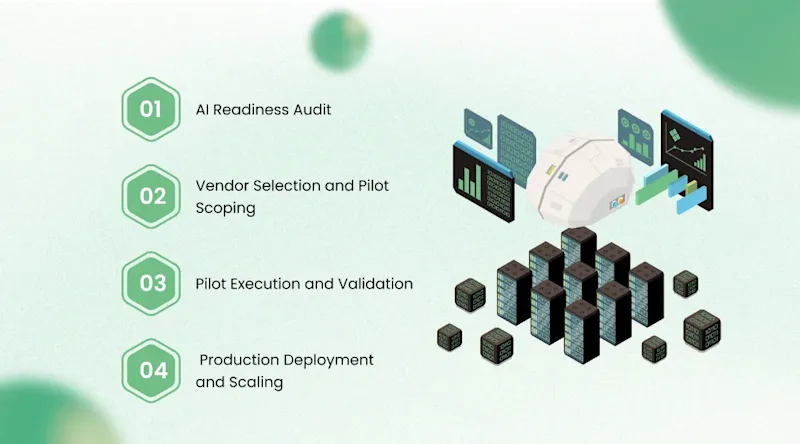

Phase 1: AI Readiness Audit (Weeks 1 to 4)

Before selecting any vendor or technology, you need an honest assessment of where your organization stands. This audit examines four dimensions.

Data readiness evaluates whether your existing data systems contain the volume, quality, and accessibility of information needed to train and deploy deep learning models for your target use cases.

Infrastructure assessment maps your current cloud services, on-premise systems, and integration architecture to identify what can support deep learning workloads and what needs upgrading.

Organizational readiness gauges whether your team has the basic data literacy and change management capacity to adopt AI-driven workflows without excessive friction.

Use case prioritization ranks your potential deep learning applications by expected ROI, implementation complexity, data requirements, and strategic alignment.

This audit can be conducted internally if you have a strong technical leader, or through a partner like KriraAI that specializes in mid-market AI readiness assessments. An external audit typically costs $15,000 to $40,000 and takes three to four weeks.
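The use case prioritization step of the audit can be made concrete with a simple weighted score across the four criteria named above. The sketch below is one possible scheme and not a prescribed method. The weights, the 1 to 5 scales, and the example scores are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical weights (they sum to 1.0); tune them to your own priorities.
WEIGHTS = {"roi": 0.4, "complexity": 0.2, "data": 0.2, "alignment": 0.2}


@dataclass(frozen=True)
class UseCase:
    name: str
    roi: int         # expected ROI, 1 (low) to 5 (high)
    complexity: int  # implementation complexity, 1 (simple) to 5 (hard)
    data: int        # data requirements, 1 (data is ready) to 5 (heavy prep needed)
    alignment: int   # strategic alignment, 1 (weak) to 5 (strong)


def priority_score(use_case: UseCase) -> float:
    """Weighted score on a 1 to 5 scale; complexity and data needs are inverted."""
    return (
        WEIGHTS["roi"] * use_case.roi
        + WEIGHTS["complexity"] * (6 - use_case.complexity)
        + WEIGHTS["data"] * (6 - use_case.data)
        + WEIGHTS["alignment"] * use_case.alignment
    )


# Illustrative scores only; real values come out of the readiness audit.
candidates = [
    UseCase("Visual inspection", roi=4, complexity=4, data=4, alignment=3),
    UseCase("Demand forecasting", roi=5, complexity=3, data=3, alignment=4),
    UseCase("Document processing", roi=4, complexity=2, data=2, alignment=3),
]

ranked = sorted(candidates, key=priority_score, reverse=True)
for use_case in ranked:
    print(f"{use_case.name}: {priority_score(use_case):.2f}")
```

The value of a scheme like this is less in the exact numbers than in forcing business and technical owners to agree on the scores before a vendor is involved.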

Phase 2: Vendor Selection and Pilot Scoping (Weeks 5 to 8)

With your audit complete, you select a deep learning services partner and scope your first pilot project. For mid-market companies, the pilot should target a single, well-defined use case with clear success metrics and a deployment timeline of 8 to 12 weeks. Avoid the temptation to pilot three things simultaneously. Your team does not have the bandwidth to manage parallel AI initiatives, and splitting focus almost always leads to three mediocre results instead of one strong one.

When evaluating vendors, prioritize partners who demonstrate three qualities. They should have documented experience with companies at your scale, not just enterprise logos. They should offer a pricing model that aligns with mid-market budgets, typically a combination of fixed project fees and usage-based compute costs rather than large annual licenses. They should provide a clear handoff plan that trains your internal team to manage the deployed solution rather than creating permanent dependency.

Phase 3: Pilot Execution and Validation (Weeks 9 to 20)

During the pilot, the vendor builds, trains, and deploys the model while your internal team provides domain expertise, data access, and feedback. A well-run pilot for a mid-market company follows a weekly cadence of progress reviews, with formal milestone checkpoints at weeks 4, 8, and 12 of the pilot (weeks 12, 16, and 20 of the overall roadmap). The validation phase at the end of the pilot compares actual model performance against the success metrics defined during scoping. This is where you make the go or no-go decision for full production deployment.
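The validation step is simplest to run when the success metrics from scoping are written down as explicit targets. The following sketch shows one way to encode that comparison. The metric names, targets, and measured values are hypothetical, and the rule that every metric must pass is an assumption that a real pilot might relax.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessMetric:
    name: str
    target: float
    actual: float
    higher_is_better: bool = True

    def met(self) -> bool:
        """True when the measured value reaches the target in the right direction."""
        if self.higher_is_better:
            return self.actual >= self.target
        return self.actual <= self.target


def go_no_go(metrics: list[SuccessMetric]) -> tuple[str, list[str]]:
    """Return the decision plus the names of any metrics that missed their target."""
    missed = [metric.name for metric in metrics if not metric.met()]
    return ("go" if not missed else "no-go", missed)


# Hypothetical targets agreed during scoping, with measured pilot results.
pilot_results = [
    SuccessMetric("forecast_error_mape_pct", target=15.0, actual=12.4, higher_is_better=False),
    SuccessMetric("stockout_reduction_pct", target=20.0, actual=23.0),
    SuccessMetric("planner_adoption_pct", target=70.0, actual=64.0),
]

decision, missed = go_no_go(pilot_results)
print(decision, missed)
```

In this illustration the model itself performs well, but the adoption target is missed, which is the kind of result that calls for a conversation about rollout rather than about the model.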

Phase 4: Production Deployment and Scaling (Weeks 21 to 36)

Full deployment involves integrating the validated model into your production workflows, training end users, establishing monitoring and retraining protocols, and planning the next use case. KriraAI recommends that mid-market companies adopt a sequential scaling approach, fully stabilizing one deep learning application before beginning the next, rather than attempting a broad AI transformation all at once. This approach respects your team's bandwidth and reduces the risk of implementation fatigue.

Three Mistakes Mid-Market Companies Make When Adopting Deep Learning

The first and most damaging mistake is treating deep learning as a technology project rather than a business initiative. Companies that assign ownership to IT without strong involvement from the business function that will use the outputs consistently underperform. The operations leader, the sales director, or the supply chain manager must co-own the initiative alongside the technical team.

The second mistake is underinvesting in data preparation. Mid-market companies typically need to spend 30 to 40 percent of their total project budget on data cleaning, normalization, and pipeline construction. Companies that skip this step and jump straight to model training end up with models that perform beautifully on test data and fail in production because the real-world data flowing into them is inconsistent, incomplete, or incorrectly formatted.

The third mistake is choosing a vendor based on technical impressiveness rather than practical fit. A vendor who shows you a stunning demo built on a perfectly curated dataset is not necessarily the right partner for your messy, real-world data environment. Ask to see deployments at companies with similar data maturity, similar team sizes, and similar industry complexity to yours.
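The data preparation work described in the second mistake usually begins with basic record-level checks for the three failure modes named there: incomplete, inconsistent, and incorrectly formatted data. Here is a minimal sketch of such checks using only the Python standard library. The field names, the sample records, and the expected date format are hypothetical.

```python
from datetime import datetime

# Hypothetical schema for an order record; replace with your own fields.
REQUIRED_FIELDS = ("order_id", "sku", "quantity", "order_date")
DATE_FORMAT = "%Y-%m-%d"


def validate_record(record: dict) -> list[str]:
    """Return a list of human-readable problems found in one record."""
    problems = []
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            problems.append(f"missing {field}")

    quantity = record.get("quantity")
    if quantity not in (None, "") and (not isinstance(quantity, int) or quantity <= 0):
        problems.append("quantity is not a positive integer")

    order_date = record.get("order_date")
    if order_date not in (None, ""):
        try:
            datetime.strptime(order_date, DATE_FORMAT)
        except (TypeError, ValueError):
            problems.append("order_date is not in YYYY-MM-DD format")
    return problems


records = [
    {"order_id": "A-1001", "sku": "WID-01", "quantity": 12, "order_date": "2026-01-15"},
    {"order_id": "A-1002", "sku": "", "quantity": 5, "order_date": "2026-01-16"},
    {"order_id": "A-1003", "sku": "WID-02", "quantity": "7", "order_date": "16/01/2026"},
]

report = {record["order_id"]: validate_record(record) for record in records}
clean_share = sum(1 for problems in report.values() if not problems) / len(report)

for order_id, problems in report.items():
    print(order_id, problems or "ok")
print(f"Clean records: {clean_share:.0%}")
```

Running checks like these on a sample from each source system before any modeling starts gives an early, inexpensive estimate of how much of the budget data preparation will consume.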

Challenges Specific to the Mid-Market Deep Learning Journey

Mid-market companies face a set of challenges that neither small businesses nor large enterprises encounter. The most persistent is the talent gap. You need people who understand deep learning well enough to manage vendor relationships, evaluate model performance, and make informed decisions about scaling, but you cannot justify hiring a full-time data science team of five to ten specialists. The practical solution is developing one or two internal "AI literate" leaders, typically from your existing analytics or engineering team, and supplementing their capabilities with an external partner.

Data fragmentation presents another significant hurdle. Most mid-market companies have data spread across six to twelve different systems, each with its own schema, update frequency, and access protocols. Building the data pipelines needed for deep learning often requires connecting systems that were never designed to talk to each other. This integration work is not glamorous, but it accounts for the majority of the effort and cost in most mid-market deep learning projects.

Budget timing creates friction as well. Deep learning projects require upfront investment that produces returns over 12 to 24 months, but many mid-market companies operate on annual budget cycles with limited capacity for multi-year commitments. Companies that treat the first year as a learning investment and plan for returns in year two consistently outperform those that demand immediate ROI and abandon projects prematurely when the first quarter does not deliver dramatic results.

Finally, change management at mid-market scale has its own character. Your teams are close-knit, processes have been refined through years of experience, and the people doing the work often know their domain better than any algorithm initially will. Resistance is not irrational. It is the natural response of skilled professionals being asked to trust a system they did not build and do not fully understand. Successful mid-market deep learning adoption requires investing as much in training, communication, and gradual rollout as in the technology itself.

The Future Competitive Landscape: What Happens Between 2026 and 2030

The next three to five years will create a decisive split among mid-market companies in virtually every industry. Companies that begin serious deep learning adoption in 2026 will have compounding advantages by 2028 and 2029 that latecomers cannot easily replicate. These advantages are not just about efficiency gains. They are about data accumulation.

Every month a deep learning model operates inside your business, it learns from your specific data environment, your customer behaviors, your operational patterns. A company that starts in 2026 will have three years of proprietary model refinement by 2029. A competitor that starts in 2029 will have a generic, freshly deployed model that needs years of operation to reach the same level of accuracy and usefulness. This compounding data advantage is the real strategic value of early adoption, and it is particularly powerful at mid-market scale where operational nuances are harder to replicate than at the enterprise level.

This guide is not just about what to do today. It is about positioning your company for a future where AI-augmented operations are the baseline expectation from customers, partners, and investors. By 2029, investors evaluating mid-market companies for acquisition or growth funding will treat AI maturity as a standard due diligence criterion, much as they treat cybersecurity maturity today. Companies without meaningful AI capabilities will face lower valuations and fewer strategic options.

Conclusion

Three points stand out from everything covered in this guide. First, deep learning services have reached a maturity and price point that makes them genuinely practical for mid-market companies, not as a scaled-down version of enterprise AI, but as a distinct category of implementation designed for your budget, your team structure, and your operational reality. Second, the applications with the highest returns at mid-market scale are not the flashiest ones in the headlines. They are operational forecasting, document automation, customer modeling, and quality control, all of which solve problems you are already spending significant money and time on today. Third, the compounding nature of AI advantage means that the window for early-mover benefit is finite. Companies that begin in 2026 will have a meaningful and hard-to-replicate advantage over those that start in 2028 or 2029.

KriraAI works with mid-market companies across industries to design and deploy deep learning solutions that are built for real-world constraints, not laboratory conditions. Their approach starts with understanding your data environment, your team capacity, and your business objectives before recommending any technology, ensuring that every dollar of your AI investment generates measurable returns at the scale you actually operate. If you are ready to explore what deep learning can do for a company like yours, reaching out to the KriraAI team for an initial readiness conversation is a practical and low-commitment first step.

FAQs

Human annotation will not disappear but will undergo a fundamental role transformation over the next three to five years. Rather than producing training examples at scale, human annotators will shift toward three higher-leverage activities: calibrating and auditing verification systems to ensure they maintain alignment with human quality standards, producing small quantities of gold-standard examples that serve as anchors for distribution monitoring and verifier calibration, and designing the specifications and constraints that guide synthetic generation in new domains. The total volume of human annotation will decrease dramatically, potentially by 80 to 90 percent for frontier model training, but the skill requirements and impact per annotation will increase correspondingly. Organizations should plan for smaller, more expert annotation teams focused on verification oversight rather than large-scale data production.

The most reliable model collapse prevention techniques currently supported by both theoretical analysis and empirical evidence combine three complementary strategies. First, maintaining a reservoir of verified real-world data that is mixed into every training iteration at a ratio of at least 10 to 20 percent prevents the complete loss of distributional grounding that causes catastrophic collapse. Second, using high-temperature sampling with nucleus sampling parameters tuned to preserve tail distributions during generation maintains output diversity across iterations. Third, monitoring distributional divergence metrics (particularly Vendi score and kernel-based maximum mean discrepancy) across generation cycles provides early warning of mode dropping, allowing intervention before collapse becomes irreversible. The combination of these three approaches has been shown to sustain stable self-training for at least 10 to 15 iterations in controlled experiments, and ongoing research is extending these bounds through more sophisticated diversity-promoting objectives and adaptive mixing strategies.
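The first of these strategies, mixing verified real data into every training iteration, can be illustrated with a small batch builder. This sketch assumes a 20 percent real-data floor and samples with replacement. The pool contents, function names, and batch size are hypothetical, and a production pipeline would do this inside its own data loader.

```python
import random


def real_examples_needed(batch_size: int, real_percent: int) -> int:
    """Smallest count that keeps real data at or above real_percent of the batch."""
    return (batch_size * real_percent + 99) // 100  # integer ceiling


def build_mixed_batch(real_pool, synthetic_pool, batch_size, real_percent=20, seed=0):
    """Draw a shuffled batch with a guaranteed minimum share of real examples."""
    rng = random.Random(seed)
    n_real = real_examples_needed(batch_size, real_percent)
    # Sampling with replacement, so a small real reservoir can still fill its quota.
    real_part = rng.choices(real_pool, k=n_real)
    synthetic_part = rng.choices(synthetic_pool, k=batch_size - n_real)
    batch = real_part + synthetic_part
    rng.shuffle(batch)
    return batch


# Hypothetical pools; each example is tagged with its origin for auditing.
real_pool = [("real", i) for i in range(30)]
synthetic_pool = [("synthetic", i) for i in range(500)]

batch = build_mixed_batch(real_pool, synthetic_pool, batch_size=64, real_percent=20)
real_count = sum(1 for origin, _ in batch if origin == "real")
print(f"{real_count} of {len(batch)} examples are real ({real_count / len(batch):.1%})")
```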

Based on current research implementations and scaling projections, a fully closed-loop synthetic data pipeline will require approximately 40 to 60 percent additional total compute compared to an equivalent training run on a static dataset. This overhead breaks down into roughly 15 to 25 percent for data generation (inference on the generator model), 15 to 30 percent for multi-stage verification (including formal checking, empirical validation, and learned quality estimation), and 5 to 10 percent for curriculum optimization and distribution monitoring. However, this comparison is misleading in isolation because the training efficiency gains from higher-quality, better-targeted synthetic data mean that the model achieves equivalent or superior capability with fewer total gradient steps. The net effect in current experiments is that closed-loop systems reach a given capability threshold with comparable or lower total compute than static-data systems, while achieving higher asymptotic capability when total compute is held constant.

The domains where fully closed-loop synthetic data generation will arrive last are those where verification requires either irreducible human judgment or expensive real-world experimentation that cannot be simulated. Creative writing quality assessment, cultural appropriateness evaluation, nuanced ethical reasoning, and tasks requiring genuine common sense about rare real-world situations all resist automated verification because there is no formal specification of correctness and no simulation environment that captures the relevant complexity. Medical and legal domains face an additional challenge: verification errors in these domains carry high real-world consequences, creating a much lower tolerance for verification pipeline failures than in domains like code or mathematics. These domains will likely maintain significant human involvement in the verification loop through at least 2030, though the human role will increasingly shift from direct annotation to oversight and audit of semi-automated verification systems.

Engineering teams should begin preparation in three concrete areas. First, instrument existing training pipelines with comprehensive data provenance tracking, recording the source, generation method, and quality assessment metadata for every training example. This metadata infrastructure is prerequisite for any closed-loop system and is independently valuable for debugging and reproducibility. Second, build or acquire multi-stage verification capabilities for your primary training domains, starting with the most automatable aspects (format compliance, factual consistency checking, execution-based validation) and progressively adding more sophisticated verification layers. Third, design your compute infrastructure for heterogeneous workloads that include generation inference, verification processing, and training in flexible proportions, rather than optimizing exclusively for training throughput. Teams that build these capabilities incrementally over the next 12 to 18 months will be positioned to adopt closed-loop methodologies as they mature, while teams that wait for turnkey solutions will face a significant capability gap.
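The provenance tracking described in the first area can start as a small, explicit record attached to every training example. The sketch below shows one possible shape for such a record, serialized as JSON Lines. The field names, the 0 to 1 quality scale, and the sample values are assumptions, not a standard.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    example_id: str
    source: str             # hypothetical labels such as "human" or "synthetic"
    generation_method: str  # how the example was produced
    quality_score: float    # assumption: 0.0 (worst) to 1.0 (best)
    verified: bool          # whether a verification stage has passed it


def to_jsonl(records: list[ProvenanceRecord]) -> str:
    """Serialize records as JSON Lines, one record per line."""
    return "\n".join(json.dumps(asdict(record), sort_keys=True) for record in records)


def from_jsonl(text: str) -> list[ProvenanceRecord]:
    """Rebuild records from JSON Lines produced by to_jsonl."""
    return [ProvenanceRecord(**json.loads(line)) for line in text.splitlines() if line]


# Illustrative records only.
records = [
    ProvenanceRecord("ex-0001", "human", "expert annotation", 0.98, True),
    ProvenanceRecord("ex-0002", "synthetic", "generator sampling", 0.71, False),
]

serialized = to_jsonl(records)
restored = from_jsonl(serialized)
unverified_ids = [record.example_id for record in restored if not record.verified]
print(serialized)
print("Awaiting verification:", unverified_ids)
```

Keeping the record flat and append-only makes it straightforward to filter training data by source or verification status later, which is what the debugging and reproducibility benefits depend on.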

Ridham Chovatiya is the COO at KriraAI, driving operational excellence and scalable AI solutions. He specialises in building high-performance teams and delivering impactful, customer-centric technology strategies.