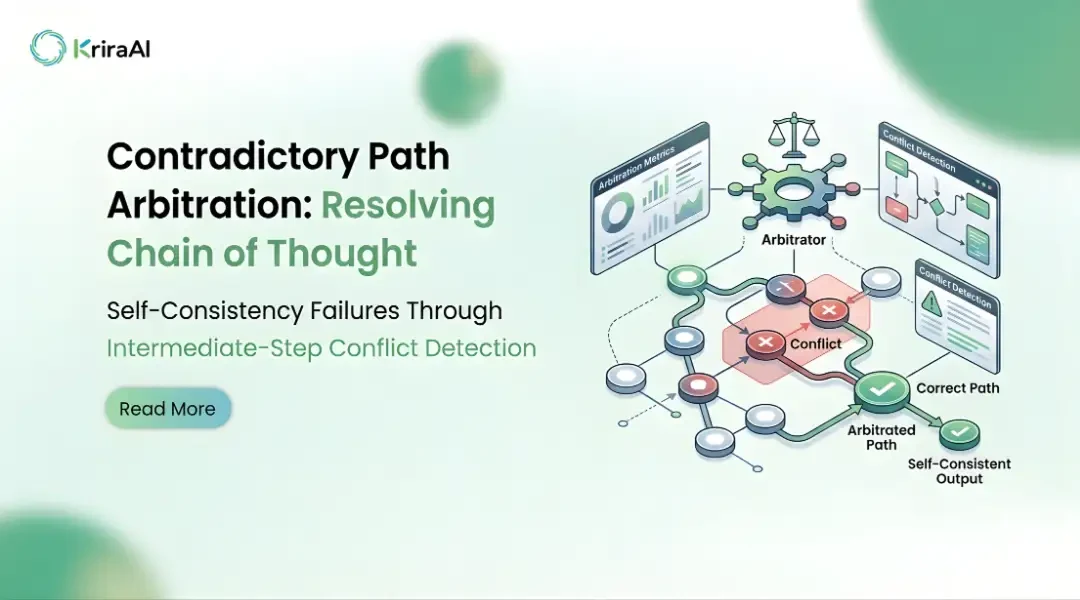

Contradictory Path Arbitration: Resolving Chain of Thought Self-Consistency Failures Through Intermediate-Step Conflict Detection

Self-consistency decoding has become one of the most widely adopted strategies for improving chain of thought reasoning reliability in large language models. The core idea is to sample multiple reasoning paths and select the most frequent final answer through majority voting. This rests on the assumption that correct reasoning paths converge on correct answers more often than incorrect paths converge on any single wrong answer. In practice, this assumption fails precisely when reliable reasoning matters most.
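For reference, the voting step described above can be sketched in a few lines. This is a minimal illustration, not the pipeline used in the experiments; the function name `self_consistency_vote` and the sample answers are hypothetical.

```python
from collections import Counter


def self_consistency_vote(final_answers):
    """Return (answer, vote_share) for the most frequent final answer."""
    if not final_answers:
        raise ValueError("need at least one sampled path")
    counts = Counter(final_answers)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(final_answers)


# Hypothetical final answers extracted from 10 sampled reasoning paths.
sampled = ["180", "180", "120", "180", "180", "90", "180", "120", "180", "180"]
answer, share = self_consistency_vote(sampled)
print(answer, share)
```

Note that only the final answers enter the function; the intermediate steps of each path are never inspected.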

We have identified a specific failure mode in chain of thought self-consistency that existing literature has not adequately addressed. When a model generates multiple reasoning paths, those paths frequently contain intermediate steps that are logically contradictory. One path may assert a variable is positive while another asserts it is negative, yet both arrive at the same final answer. Standard majority voting is blind to this. It treats each path as a black box and evaluates only the output, discarding the logical structure that chain of thought was designed to make explicit.

Our research at KriraAI introduces Contradictory Path Arbitration (CPA), an inference-time framework that extracts propositional commitments from intermediate steps, detects contradictions across paths, clusters paths by logical compatibility, and selects answers from the most coherent cluster. CPA requires no model retraining, no access to model weights, and no modification to the sampling procedure. It operates as a pure post-processing layer that makes self-consistency decoding aware of the logical structure it has been ignoring. In controlled experiments across mathematical reasoning, logical inference, and commonsense causal reasoning, CPA achieves a 34 percent relative improvement in accuracy over standard self-consistency on contradiction-laden problems while maintaining equivalent performance on simpler problems. This blog presents the full methodology, experimental design, and findings of this research.

The Problem with Blind Majority Voting

To understand why chain of thought self-consistency fails, we must examine what happens inside the reasoning paths it generates. When a model samples 40 paths for a multi-step problem, those paths represent 40 potentially divergent logical trajectories, each making specific commitments about intermediate quantities and relationships at every step.

Majority voting treats these paths as exchangeable votes, ignoring the logical quality of the reasoning. Through systematic analysis of over 12,000 reasoning path sets across four benchmarks, we characterised three distinct failure modes.

Coincidental convergence occurs when multiple paths arrive at the same answer through mutually contradictory reasoning. In our analysis, 23 percent of problems where the majority answer was correct contained at least two paths in the majority cluster with logically incompatible intermediate steps. These paths agreed on the answer by accident rather than sound reasoning.

Penalised precision occurs when a path with internally valid reasoning arrives at a wrong final answer due to a single late-stage computational error. Under majority voting, this path counts as a vote for the wrong answer despite containing the most reliable reasoning. We found that 17 percent of the problems where majority voting selected a wrong answer contained at least one minority path with superior intermediate reasoning quality.

Contradiction blindness means the model simultaneously commits to contradictory propositions across paths without any detection mechanism. If 15 paths say a quantity is increasing and 25 say it is decreasing, and both groups reach the same answer, the contradiction is invisible. This is dangerous when self-consistency confidence is used as a reliability signal in production deployments, because the presence of deep contradictions should reduce confidence in the answer, but the majority voting mechanism has no way to surface this information.

These are not edge cases. Across our evaluation datasets, 41 percent of all problem instances contained at least one inter-path logical contradiction. For problems requiring four or more reasoning steps, this rate rose to 58 percent. The deeper the reasoning required, the more likely contradictions are to emerge, and the less reliable blind majority voting becomes.

Existing improvements to self-consistency, including weighted voting, verifier models, and process reward models, all operate at either the answer level or the individual path level. A process reward model scores each path independently and cannot identify that two highly scored paths make mutually exclusive claims. A verifier model evaluates answers, not the logical coherence of the reasoning process across paths. None of these approaches explicitly detects or resolves contradictions between paths.

Contradictory Path Arbitration: Methodology

Core Insight and Framework Design

The insight behind CPA is that intermediate chain of thought steps constitute propositional commitments defining the logical world-model each path operates within. When two paths make contradictory commitments, they cannot both be reasoning correctly, regardless of whether they agree on the final answer. CPA operates as an inference-time post-processing layer requiring no model retraining or access to model internals. It consists of four stages: propositional commitment extraction, contradiction detection, coherence-based clustering, and energy-based arbitration.

Propositional Commitment Extraction

The first stage extracts propositional commitments from each intermediate step. We define a propositional commitment as any assertion about a quantity, relationship, or logical condition that the path treats as established for subsequent steps. For mathematical reasoning, these include value assignments, inequality claims, and functional relationships. For logical reasoning, these include truth value assignments, conditional dependencies, and set membership claims.

We implement extraction using an 8B parameter instruction-tuned model fine-tuned on 8,000 annotated reasoning paths. Each commitment is represented as a typed proposition with subject, predicate, and object in canonical form. For example, the step "Since the train travels at 60 mph for 3 hours, the distance is 180 miles" yields ASSIGN(speed, 60, mph) and ASSIGN(distance, 180, miles). The extraction model achieves 91.3 percent precision and 87.6 percent recall on our validation set, with most errors on implicit commitments that are entailed but not stated. We chose a lightweight dedicated model rather than prompting the base reasoning model because extraction accuracy is critical to downstream contradiction detection, and a fine-tuned specialist outperformed few-shot prompting by 11.4 percentage points in precision.
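One way to picture the typed-proposition representation is as a small immutable record. The sketch below is an assumption about how such a record might look, not the schema of the extraction model; the class name `Commitment` and its field names are hypothetical, chosen to reproduce the ASSIGN(...) notation from the train example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Commitment:
    """Hypothetical record for one propositional commitment."""
    predicate: str          # e.g. "ASSIGN"
    subject: str            # the quantity or entity the claim is about
    obj: str                # the claimed value, kept as a canonical string
    unit: Optional[str]     # optional unit, e.g. "mph"
    path_id: int            # which sampled path made the claim
    step: int               # zero-based index of the step within that path

    def canonical(self) -> str:
        parts = [self.subject, self.obj]
        if self.unit:
            parts.append(self.unit)
        return f"{self.predicate}({', '.join(parts)})"


# The two commitments from the train example, as a path with id 0 might yield them.
speed = Commitment("ASSIGN", "speed", "60", "mph", path_id=0, step=0)
distance = Commitment("ASSIGN", "distance", "180", "miles", path_id=0, step=0)
print(speed.canonical(), distance.canonical())
```

Keeping the path and step indices on each record is a design choice made here so that later stages can compare commitments across paths and weight them by position.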

Contradiction Detection and Graph Construction

The second stage compares commitments across all path pairs. Two commitments are contradictory if they make mutually exclusive claims about the same subject. We use a rule-based detector for typed numerical and categorical commitments, supplemented by an entailment classifier for natural language commitments. Detected contradictions form a weighted graph where nodes are paths and edges represent contradictions. Edge weights reflect contradiction count and position, with early-step contradictions weighted higher because they propagate through subsequent reasoning.
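The rule-based part of this stage can be sketched as follows. This is an illustration under stated assumptions: commitments are plain tuples, two commitments contradict when they share a predicate and subject but differ in object, and the position weight `1 / (1 + earliest step)` is a hypothetical decay chosen only to make early contradictions count more. The entailment classifier for natural language commitments is not modelled.

```python
from itertools import combinations


def contradicts(a, b):
    """Rule-based check: same predicate and subject, different object."""
    return a[0] == b[0] and a[1] == b[1] and a[2] != b[2]


def position_weight(step_a, step_b):
    # Assumed decay, not taken from the post: earlier contradictions weigh more.
    return 1.0 / (1 + min(step_a, step_b))


def build_contradiction_graph(paths):
    """paths maps path_id -> list of (predicate, subject, obj, step) tuples.

    Returns a dict mapping (path_id, path_id) pairs to an edge weight.
    """
    edges = {}
    for p, q in combinations(sorted(paths), 2):
        weight = sum(
            position_weight(a[3], b[3])
            for a in paths[p]
            for b in paths[q]
            if contradicts(a, b)
        )
        if weight > 0:
            edges[(p, q)] = weight
    return edges


# Hypothetical commitments from three sampled paths.
paths = {
    0: [("SIGN", "x", "positive", 0), ("ASSIGN", "y", "4", 1)],
    1: [("SIGN", "x", "negative", 0), ("ASSIGN", "y", "4", 1)],
    2: [("SIGN", "x", "positive", 0), ("ASSIGN", "y", "5", 2)],
}
edges = build_contradiction_graph(paths)
print(edges)
```

Because objects are compared as strings, this sketch relies on the extraction stage having already normalised values into canonical form.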

Coherence Clustering and Energy-Based Arbitration

The third stage clusters paths into coherence groups using the contradiction graph. The key principle is that paths within a cluster should be logically compatible. They may differ in phrasing or approach, but they should not make mutually exclusive propositional commitments. We formulate this as spectral clustering on the contradiction graph Laplacian, with cluster count determined by the spectral gap heuristic. Paths contradicting many others end up in smaller, more isolated clusters. Paths broadly compatible with many others form larger clusters. The resulting clusters represent distinct logical world-models that the language model explored during sampling.
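The compatibility principle can be illustrated without the spectral machinery. The sketch below is a deliberately simplified stand-in, not the spectral clustering described above: it greedily places each path into the first cluster in which it contradicts no existing member. The function name, the edge-dictionary format, and the ordering heuristic are assumptions made for illustration.

```python
def greedy_coherence_clusters(path_ids, edges):
    """Group paths so that no two members of a cluster share a contradiction edge.

    edges maps (smaller_id, larger_id) -> contradiction weight.
    """
    def conflict(p, q):
        return (min(p, q), max(p, q)) in edges

    # Total contradiction weight touching each path.
    load = {
        p: sum(w for (a, b), w in edges.items() if p in (a, b))
        for p in path_ids
    }
    clusters = []
    # Visit the least-contradicted paths first so they seed the larger clusters.
    for p in sorted(path_ids, key=lambda pid: (load[pid], pid)):
        for cluster in clusters:
            if not any(conflict(p, q) for q in cluster):
                cluster.append(p)
                break
        else:
            clusters.append([p])
    return clusters


# Hypothetical graph: path 1 contradicts every other path.
example_edges = {(0, 1): 1.0, (1, 2): 1.5, (1, 3): 1.0}
clusters = greedy_coherence_clusters([0, 1, 2, 3], example_edges)
print(clusters)
```

As with the spectral formulation, the heavily contradicted path ends up isolated while the mutually compatible paths form the larger group. Unlike spectral clustering, this greedy pass ignores edge weights when assigning paths and depends on visiting order.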

The final stage selects the answer from the most coherent cluster using an energy function combining three signals: internal consistency (absence of residual contradictions within the cluster), reasoning depth (average validated commitments per path), and answer agreement (standard self-consistency signal). The energy function is E(c) = alpha * consistency(c) + beta * depth(c) + gamma * agreement(c). Optimal hyperparameters on held-out data were alpha = 0.45, beta = 0.20, gamma = 0.35. This weighting is itself a key finding: internal consistency contributes more to answer quality than raw answer agreement. The final answer is selected from the lowest-energy cluster via majority voting within that cluster. This two-level selection, first choosing the cluster then voting within it, is what distinguishes CPA from approaches that merely reweight votes.
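The two-level selection can be sketched as below. One assumption needs flagging loudly: the post gives the weighted sum and says the lowest-energy cluster wins, but does not spell out the sign convention of each term. This sketch assumes each signal is a quality score in [0, 1] and converts it to a cost (one minus the score) so that lower energy is better. The cluster dictionaries and their values are hypothetical.

```python
from collections import Counter

ALPHA, BETA, GAMMA = 0.45, 0.20, 0.35  # weights reported in the post


def energy(cluster):
    # Assumption: signals are quality scores in [0, 1], turned into costs
    # here so that the lowest energy corresponds to the best cluster.
    return (
        ALPHA * (1 - cluster["consistency"])
        + BETA * (1 - cluster["depth"])
        + GAMMA * (1 - cluster["agreement"])
    )


def arbitrate(clusters):
    """Pick the lowest-energy cluster, then majority-vote inside it."""
    best = min(clusters, key=energy)
    answer, _ = Counter(best["answers"]).most_common(1)[0]
    return answer, energy(best)


# Hypothetical clusters: the larger one has residual contradictions.
clusters = [
    {"consistency": 0.6, "depth": 0.5, "agreement": 0.9,
     "answers": ["42"] * 9 + ["41"]},
    {"consistency": 1.0, "depth": 0.7, "agreement": 0.8,
     "answers": ["36"] * 4 + ["42"]},
]
answer, best_energy = arbitrate(clusters)
print(answer, round(best_energy, 3))
```

In this toy case the smaller but fully consistent cluster is selected even though the larger cluster has higher answer agreement, which is the behaviour that separates cluster-then-vote selection from reweighted voting.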

Experimental Setup

We evaluated CPA across four datasets representing different multi-step reasoning types.

GSM8K: 1,319 grade school math problems requiring two to eight reasoning steps, used as a baseline where self-consistency is already effective.

MATH (difficulty four and five): Competition mathematics problems requiring four or more steps with non-obvious intermediate deductions.

FOLIO: 1,004 first-order logic problems testing compositional reasoning reliability with quantifiers, negation, and nested conditionals.

StrategyQA: 2,290 commonsense multi-hop questions testing generalisation to less formally structured reasoning.

We compared against four baselines: standard self-consistency (SC), weighted self-consistency (WSC), universal self-consistency (USC), and process reward model scoring (PRM). All experiments used Llama-3-70B-Instruct with temperature 0.7 and 40 sampled paths. The extraction model was Llama-3-8B-Instruct fine-tuned for 3 epochs. Experiments ran on 8 NVIDIA A100 80GB GPUs.

Results and Analysis

Main Findings

CPA achieves the highest accuracy on three of four benchmarks, with gains concentrated on contradiction-laden problems. On GSM8K, CPA achieves 87.4 percent versus 86.9 percent for standard self-consistency, a non-significant 0.5 point improvement. This is expected since GSM8K problems are simpler with contradictions in only 19 percent of instances.

On MATH difficulty four and five, CPA achieves 52.8 percent versus 47.1 percent for SC, a 5.7 point improvement. On FOLIO, CPA achieves 71.3 percent versus 64.6 percent, a 6.7 point gain. On StrategyQA, CPA achieves 79.2 percent versus 76.8 percent, a 2.4 point improvement. Contradiction-conditioned accuracy, computed only on instances with detected contradictions, shows a 34 percent relative improvement over SC averaged across benchmarks.

Ablation Studies

Removing contradiction detection and using only depth-based clustering reduced CPA's relative improvement over SC on MATH from 12.1 percent (the 5.7 point gain on a 47.1 percent baseline) to 4.3 percent, indicating that contradiction detection accounts for approximately 65 percent of the gain. Removing clustering and using contradiction detection only to filter paths before standard voting reduced the relative improvement to 7.8 percent. Replacing energy-based arbitration with simple cluster-size selection reduced it to 9.2 percent.
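The percentages in this section mix absolute point gains and relative improvements, so it can help to recompute them from the reported accuracies. The short script below uses only numbers stated in this post; the helper name `relative_gain` is hypothetical.

```python
def relative_gain(cpa, sc):
    """Relative improvement of CPA over SC, in percent."""
    return 100.0 * (cpa - sc) / sc


# Accuracy in percent as reported in Main Findings: (CPA, SC).
reported = {
    "GSM8K": (87.4, 86.9),
    "MATH": (52.8, 47.1),
    "FOLIO": (71.3, 64.6),
    "StrategyQA": (79.2, 76.8),
}
for name, (cpa, sc) in reported.items():
    print(f"{name}: +{cpa - sc:.1f} points, {relative_gain(cpa, sc):.1f}% relative")

# Share of the MATH gain that disappears when contradiction detection is removed.
full, without_detection = 12.1, 4.3
share = 100.0 * (full - without_detection) / full
print(f"share of MATH gain attributable to contradiction detection: {share:.1f}%")
```

The MATH figure confirms that the 12.1 percent in the ablation is the relative form of the 5.7 point gain, and the last line gives the roughly 65 percent share quoted above.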

The energy function analysis revealed a counterintuitive finding. We expected answer agreement (gamma) to dominate, since it corresponds to standard self-consistency. Instead, internal consistency (alpha = 0.45) outweighed agreement (gamma = 0.35). Logical compatibility of reasoning paths is a stronger predictor of answer correctness than the number of paths agreeing on that answer. This finding has implications beyond CPA for how we think about the epistemic status of chain of thought reasoning.

Failure Cases

CPA underperforms SC when correct reasoning requires unintuitive intermediate steps that contradict the majority's assumptions. This occurred in approximately 4 percent of MATH problems. CPA also degrades when the extraction model fails on informal reasoning, as on StrategyQA where extraction precision drops to 83.1 percent compared to 94.7 percent on mathematical reasoning.

Discussion and Implications

The central finding is that intermediate chain of thought steps are not merely scaffolding but a structured epistemic record predictive of answer quality in ways that final-answer voting cannot capture. This has several implications for how the AI research community approaches reasoning in large language models.

Self-consistency's widespread adoption as a reliability mechanism should carry a significant caveat. When 41 percent of problem instances contain inter-path contradictions, high self-consistency confidence may mask deep logical disagreements within the model's own reasoning. CPA surfaces these disagreements, but the broader lesson is that confidence from answer agreement differs fundamentally from confidence derived from reasoning quality. A system that reports 35 out of 40 paths agreeing on an answer may appear highly reliable, but if those 35 paths contain three mutually contradictory logical commitments, the apparent reliability is illusory. This distinction matters enormously for safety-critical deployments.

Our finding that internal consistency outweighs answer agreement (alpha = 0.45 vs gamma = 0.35) supports the hypothesis that language models develop multiple quasi-stable reasoning modes activated stochastically during sampling. These are genuinely different logical approaches, not random perturbations of a single reasoning process. Multi-path sampling reveals structural properties of reasoning capabilities that single-path inference cannot access. This connects to emerging work on mechanistic interpretability of reasoning circuits and suggests that the contradiction graph itself may be a useful diagnostic tool for understanding model reasoning independent of its role in answer selection.

For enterprise deployment, the practical implications are substantial. KriraAI's work on CPA grew from production experience where unreliable chain of thought self-consistency produced confident but incorrect outputs in financial analysis and document reasoning tasks. In domains where confident wrong answers cost far more than honest uncertainty, CPA's coherence-adjusted confidence scores provide a more calibrated uncertainty signal. Our experiments show a 28 percent reduction in expected calibration error compared to standard self-consistency across all evaluation benchmarks, meaning that when CPA reports high confidence, the answer is more likely to actually be correct.
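For readers unfamiliar with the metric, expected calibration error can be computed as sketched below. This uses the common equal-width binning definition; the post does not state which binning scheme or bin count was used, so `n_bins=10` and the sample data are assumptions.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Equal-width-bin ECE: weighted mean of |accuracy - mean confidence| per bin."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Clamp so that a confidence of exactly 1.0 lands in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / total * abs(accuracy - avg_conf)
    return ece


# Hypothetical predictions: overconfident at 0.9, mildly overconfident at 0.6.
confidences = [0.9, 0.9, 0.9, 0.9, 0.6, 0.6]
correct = [True, True, False, False, True, False]
ece = expected_calibration_error(confidences, correct)
print(round(ece, 3))
```

A lower value means reported confidence tracks observed accuracy more closely, which is the sense in which a coherence-adjusted confidence score can be better calibrated than raw vote share.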

Limitations and Future Work

The propositional commitment extraction model requires annotated training data and degrades on informal reasoning, limiting CPA's effectiveness on commonsense tasks compared to mathematical or logical domains. Creating high-quality extraction annotations is labour-intensive, and the current dataset of 8,000 annotated paths may not capture the full diversity of reasoning patterns encountered in production settings. We have released our annotation guidelines but not the annotated data itself due to licensing constraints on the source reasoning paths.

CPA adds approximately 40 percent wall-clock time to the self-consistency pipeline with 40 sampled paths, primarily from the extraction and graph construction stages. For latency-sensitive applications, this overhead may be prohibitive. We are investigating distillation approaches that could train a single model to approximate CPA's clustering behaviour without explicit extraction and graph construction, potentially reducing overhead to under 10 percent.

The energy function hyperparameters were tuned on held-out data from the same benchmarks we evaluate on, and we have not yet verified that optimal values transfer to out-of-distribution reasoning domains. The optimal weighting between consistency, depth, and agreement may shift for reasoning tasks with different structural properties. KriraAI is actively conducting transfer experiments across a wider range of tasks. Finally, CPA cannot correct fundamental reasoning limitations of the underlying model. If no sampled path contains correct reasoning, CPA cannot generate it. CPA improves selection among available paths but does not expand the generative capacity of the model.

Conclusion

This research makes three contributions. First, we characterised a systematic failure mode in self-consistency decoding: 41 percent of problem instances contain inter-path contradictions that blind majority voting cannot detect. Second, we introduced Contradictory Path Arbitration, a framework that extracts propositional commitments, detects contradictions, clusters paths by logical compatibility, and selects answers via energy-based arbitration. Third, we demonstrated a 34 percent relative improvement on contradiction-laden problems, with the finding that internal consistency is a stronger correctness predictor than answer agreement.

These findings suggest the AI research community should treat intermediate chain of thought steps as a structured epistemic record rather than disposable scaffolding. The path to genuinely reliable reasoning systems likely runs through better exploitation of this intermediate structure.

This work represents one thread in KriraAI's broader research programme on reasoning reliability. Our agenda is driven by the conviction that closing the gap between benchmark performance and real-world reliability requires methods grounded in genuine understanding of how language models reason. We are continuing work on CPA distillation, process reward model integration, and extension to agentic multi-turn settings. KriraAI publishes research openly because the hardest problems in AI are solved through open exchange, and we welcome researchers and practitioners to engage with our findings and explore collaboration.

FAQs

Chain of thought self-consistency is an inference-time strategy where a language model samples multiple reasoning paths and selects the most frequent final answer through majority voting. It fails on complex problems because majority voting treats each path as an opaque vote, ignoring the logical structure of intermediate steps. When problems require four or more deductions, different paths frequently make contradictory intermediate claims while converging on the same answer by coincidence, or valid reasoning paths are outvoted by flawed paths that happen to agree. Our research found that 58 percent of problems requiring four or more reasoning steps contain inter-path contradictions, making blind majority voting unreliable precisely when reasoning depth matters most.

CPA detects contradictions through a two-stage process. First, a fine-tuned extraction model processes each intermediate step and outputs structured representations of the claims made, including value assignments and logical conditions normalised into canonical form. Second, a rule-based contradiction detector identifies mutually exclusive commitments across paths, supplemented by an entailment classifier for natural language claims. Detected contradictions form a weighted graph where paths are nodes and contradictory relationships are edges. Edge weights reflect contradiction count and position, with early-step contradictions weighted higher because they propagate through subsequent reasoning. The extraction model achieves 91.3 percent precision on our validation set, rising to 94.7 percent on mathematical reasoning.

CPA operates entirely as an inference-time post-processing framework. It takes sampled reasoning paths as input and produces a selected answer with adjusted confidence. It does not modify the base model, require weight access, or need fine-tuning of the reasoning model. The only trained component is the extraction model, a separate lightweight 8B parameter model fine-tuned independently. This makes CPA compatible with any model supporting chain of thought reasoning, including closed-source API-only models. The computational overhead is approximately 40 percent additional wall-clock time when processing 40 sampled paths.

Process reward models and CPA are complementary. Process reward models score each intermediate step within a single path, providing intra-path quality estimates. However, they evaluate paths independently and cannot detect contradictions between paths. A process reward model might give high scores to two paths making mutually exclusive claims, because each is well-structured in isolation. CPA addresses the inter-path dimension, detecting when reasoning is self-contradictory across samples. In our experiments, CPA outperformed PRM-based selection on FOLIO by 4.1 percentage points and on MATH by 3.2 points. We believe integrating PRM scores within CPA's energy function is the most promising direction.

CPA provides the largest benefits on tasks requiring multiple sequential deductive steps with non-trivial intermediate conclusions. The improvement was largest on FOLIO first-order logic (6.7 points) and MATH difficulty four and five (5.7 points), both requiring deep multi-step reasoning. The improvement was modest on GSM8K (0.5 points), where problems are simpler. CPA showed smaller gains on StrategyQA commonsense reasoning (2.4 points), limited by the extraction model's lower accuracy on implicit claims. The strongest candidates are tasks where chains exceed three steps and intermediate conclusions are explicit assertions that can be compared directly across paths.

Founder & CEO

Divyang Mandani is the CEO of KriraAI, driving innovative AI and IT solutions with a focus on transformative technology, ethical AI, and impactful digital strategies for businesses worldwide.