AI in Textile Manufacturing: How KriraAI Raised Defect Detection to 97%

In a 140,000-spindle textile manufacturing operation running 312 looms across three shifts, every minute of production generates roughly 38 linear metres of woven fabric. At that throughput, a single defect missed during inspection does not stay a single defect for long: it propagates through downstream dyeing, finishing, and cutting until it surfaces as a customer return, a rejected shipment, or a penalty deduction from a global apparel brand that will not tolerate anything worse than AQL 1.5. The client in this engagement, a leading textile manufacturing enterprise operating multiple high-speed weaving and processing facilities across western India, was catching approximately 62% of fabric defects through manual visual inspection. That meant nearly four out of every ten defective metres were reaching customers. The annual cost of this gap, measured in returns, rework, waste, and brand penalties, exceeded 18 crore rupees. When the company's quality leadership reached out to KriraAI, the question was not whether AI could help. It was whether a computer vision system could operate at the speed, resolution, and accuracy required to inspect fabric on a production line running at 22 metres per minute without slowing it down. This post covers the full story: the problem in operational detail, the textile manufacturing AI platform KriraAI built, the architecture behind it, and the measured results the client achieved within six months of going live.

The Problem KriraAI Was Called In To Solve

The client operated a vertically integrated textile facility producing shirting, suiting, and dress-weight fabrics for domestic and export markets. Their production floor ran 312 high-speed rapier and air-jet looms feeding into a continuous dyeing and finishing line. Fabric inspection happened at two points — a grey fabric station after weaving and a final station after finishing. Both were entirely manual, staffed by trained inspectors using the four-point grading system.

The Limits of Human Inspection at Industrial Speed

Each inspector examined fabric moving at 15 to 22 metres per minute, which leaves only about 2.7 to 4 seconds of visual exposure per linear metre. The client's internal audit confirmed a 62% average detection rate, dropping below 54% during the final two hours of each eight-hour shift. The defects being missed were not trivial: broken picks, missing ends, oil stains, reed marks, and selvedge distortions were passing through to the cutting table. Some defects only became visible after dyeing, by which point the fabric had consumed dye chemicals, finishing agents, and machine time. The cost of catching a defect at the loom was 12 rupees per metre. The same defect caught after finishing cost 87 rupees per metre. A defect reaching a customer cost over 340 rupees per metre when factoring in return logistics and penalty deductions.
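The escalation in those per-metre figures is easier to see as ratios. The sketch below uses only the three costs quoted above; the function names and the 1,000-metre volume in the usage lines are illustrative assumptions, not client data.

```python
# Per-metre cost of a defect by the stage at which it is caught (rupees),
# taken from the figures quoted in this section.
COST_PER_METRE = {"loom": 12, "after_finishing": 87, "customer": 340}


def escalation_factor(stage: str, baseline: str = "loom") -> float:
    """How many times more a defect costs at `stage` than at `baseline`."""
    return COST_PER_METRE[stage] / COST_PER_METRE[baseline]


def avoidable_cost(metres: float, caught_at: str, could_catch_at: str = "loom") -> float:
    """Extra rupees spent because defective metres were caught late."""
    return metres * (COST_PER_METRE[caught_at] - COST_PER_METRE[could_catch_at])


if __name__ == "__main__":
    print(round(escalation_factor("after_finishing"), 2))  # 7.25
    print(round(escalation_factor("customer"), 1))         # 28.3
    # Hypothetical volume: 1,000 defective metres that reached a customer.
    print(avoidable_cost(1000, "customer"))                # 328000
```

On these numbers, a defect that reaches a customer costs roughly 28 times what it would have cost at the loom, which is why moving detection upstream matters more than improving final inspection alone.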

Data That Existed but Was Never Connected

The client's looms logged stop events, pick density variations, and tension anomalies through electronic let-off systems. The finishing line recorded temperature, speed, and chemical concentration. The ERP system held order, lot, and customer quality data. None of these streams were connected to the inspection process. A loom recording seventeen stop events during a beam run was producing fabric with statistically higher defect density, but the inspector examining that fabric had no way of knowing this. The information existed in silos, never correlated, never used to predict where defects would appear.

Competitive Pressure from Global Buyers

Two major European buyers had begun mandating AI-assisted inspection certificates as a procurement prerequisite for their 2025 sourcing cycle. A third buyer in the US market had communicated similar intentions for 2026. The client faced a hard deadline: implement a credible AI quality control capability for textiles within twelve months or risk losing contracts representing 28% of export revenue.

What KriraAI Built: A Real-Time Fabric Intelligence Platform

KriraAI designed and delivered FabricMind — a real-time computer vision fabric inspection system integrated with loom telemetry and the client's MES and ERP infrastructure. The system was not a standalone camera add-on or a pilot-stage proof of concept. It was a production-hardened platform engineered to operate continuously across three shifts, seven days a week, inspecting every linear metre of fabric the client's looms produced. The system performs three functions simultaneously: automated defect detection at line speed, defect classification and severity grading across 23 categories, and predictive defect correlation with upstream loom behaviour.

The Computer Vision Core

The defect detection engine uses a ResNet-50 backbone with a feature pyramid network and deformable convolutional layers adapted for woven fabric. Standard convolutions assume rigid geometric features, but fabric defects manifest as irregular deformations within a repetitive weave pattern. Deformable convolutions let the network adaptively adjust its receptive field to capture elongated defects like reed marks spanning twenty or more warp threads. KriraAI trained the model using supervised fine-tuning on 186,000 annotated fabric images across fourteen constructions. Annotation involved three certified inspectors over eight weeks, with inter-annotator agreement enforced above 91%. Inference runs on edge-deployed NVIDIA T4 GPUs with TensorRT optimisation delivering 47ms per frame at 4096 by 2048 resolution, providing complete coverage at full line speed.
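To make the idea of an adaptive receptive field concrete, here is a minimal pure-Python sketch of a single output value of a 3x3 deformable convolution. It is not the production model: the offsets are supplied by hand (in a real network a small convolution predicts them), there is a single channel, and all names are illustrative assumptions.

```python
import math


def bilinear(img, y, x):
    """Sample a 2-D list `img` at fractional (y, x); outside the image reads as 0."""
    h, w = len(img), len(img[0])
    y0, x0 = math.floor(y), math.floor(x)
    total = 0.0
    for yy, wy in ((y0, 1 - (y - y0)), (y0 + 1, y - y0)):
        for xx, wx in ((x0, 1 - (x - x0)), (x0 + 1, x - x0)):
            if 0 <= yy < h and 0 <= xx < w:
                total += wy * wx * img[yy][xx]
    return total


def deformable_conv_at(img, kernel, offsets, cy, cx):
    """One output value of a 3x3 deformable convolution centred on (cy, cx).

    kernel  : 3x3 weights
    offsets : 3x3 grid of (dy, dx) shifts, one per kernel tap
    """
    out = 0.0
    for i in range(3):
        for j in range(3):
            dy, dx = offsets[i][j]
            out += kernel[i][j] * bilinear(img, cy + (i - 1) + dy, cx + (j - 1) + dx)
    return out


if __name__ == "__main__":
    # A slanted "defect": ones along the main diagonal of a 7x7 image.
    img = [[1.0 if y == x else 0.0 for x in range(7)] for y in range(7)]
    # A horizontal line detector: only the middle row of the kernel is active.
    kernel = [[0, 0, 0], [1, 1, 1], [0, 0, 0]]
    rigid = [[(0.0, 0.0)] * 3 for _ in range(3)]
    # Bend the middle row so its taps follow the diagonal.
    bent = [[(0.0, 0.0)] * 3, [(-1.0, 0.0), (0.0, 0.0), (1.0, 0.0)], [(0.0, 0.0)] * 3]
    print(deformable_conv_at(img, kernel, rigid, 3, 3))  # 1.0
    print(deformable_conv_at(img, kernel, bent, 3, 3))   # 3.0
```

With zero offsets this reduces to an ordinary convolution tap pattern, which sees only one pixel of the slanted line. With learned offsets the same kernel can follow the defect, which is the property the paragraph above relies on for elongated or irregular flaws.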

Loom Telemetry Correlation and Operational Integration

The second intelligence layer correlates defects with upstream loom telemetry. KriraAI built a temporal feature engineering pipeline extracting stop events, weft insertion failures, and tension deviations, mapping them to fabric positions using pick-count synchronisation. A gradient-boosted ensemble model predicts defect probability based on recent loom behaviour, flagging high-risk zones before fabric reaches inspection. FabricMind outputs integrate into the client's Wonderware MES via REST APIs and into SAP ERP via OData connectors. Every detected defect generates a georeferenced defect map — a digital twin of each roll — that travels to the cutting room, enabling defect-aware pattern layouts in the Gerber cutting system.
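Pick-count synchronisation can be sketched as a simple unit conversion: each pick adds one weft thread, so cumulative picks divided by pick density gives woven length. The version below is a simplified assumption that ignores take-up, shrinkage, and roll-start offsets. `LoomEvent`, `fabric_position_m`, and `events_near` are hypothetical names, not FabricMind APIs.

```python
from dataclasses import dataclass


@dataclass
class LoomEvent:
    kind: str        # e.g. "stop", "weft_failure", "tension_deviation"
    pick_count: int  # cumulative picks inserted since the start of the roll


def fabric_position_m(pick_count: int, picks_per_cm: float) -> float:
    """Convert a cumulative pick count into metres of fabric woven."""
    return pick_count / picks_per_cm / 100.0


def events_near(events, defect_position_m, picks_per_cm, window_m=0.5):
    """Loom events whose fabric position lies within `window_m` of a defect."""
    return [
        e for e in events
        if abs(fabric_position_m(e.pick_count, picks_per_cm) - defect_position_m) <= window_m
    ]


if __name__ == "__main__":
    # Hypothetical events on a fabric woven at 30 picks per cm.
    events = [
        LoomEvent("stop", 90_000),
        LoomEvent("weft_failure", 149_000),
        LoomEvent("stop", 150_000),
    ]
    print(fabric_position_m(150_000, 30))                  # 50.0
    print([e.kind for e in events_near(events, 50.0, 30)])  # ['weft_failure', 'stop']
```

The same mapping run in the other direction is what lets a predicted high-risk zone be expressed as a metre range on the roll before the fabric reaches the camera.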

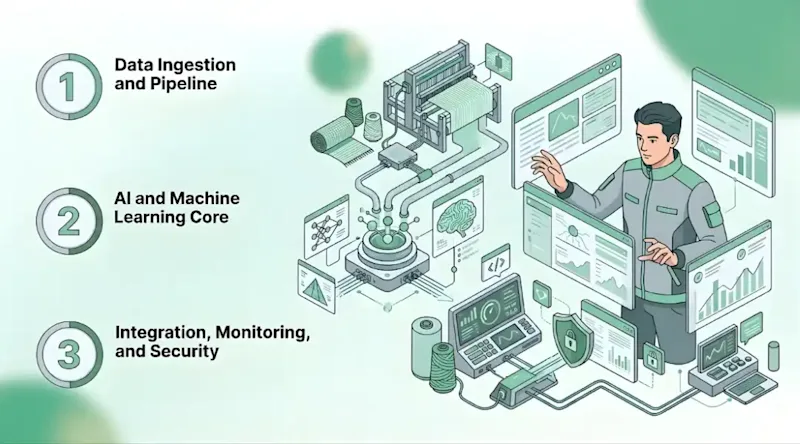

Solution Architecture for AI in Textile Manufacturing

Data Ingestion and Pipeline

The ingestion layer handles three data streams at different velocities. Line-scan camera imagery flows at 120 frames per second through a GigE Vision interface into an edge buffer managed by a custom C++ frame acquisition service that performs debayering, white balance normalisation, and tiling before forwarding to the GPU via shared memory, eliminating network serialisation. Loom telemetry arrives via OPC-UA connectors from each PLC, collected by an Apache Kafka cluster on the factory network. Schema normalisation and temporal alignment run as Apache Flink stream processing jobs computing rolling statistical features — mean tension over 500 picks, stop-event frequency, weft failure rate — published as enriched streams. ERP data is extracted via batch CDC from SAP HANA using Debezium connectors into a PostgreSQL staging layer for order and quality context joins.
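The rolling features named above (mean tension over 500 picks, stop-event frequency, weft failure rate) can be illustrated without a stream processor. This is a single-process sketch of the windowing logic only, not the Flink job itself; the class and field names are assumptions.

```python
from collections import deque


class RollingLoomFeatures:
    """Rolling statistics over the most recent `window_picks` picks of one loom."""

    def __init__(self, window_picks: int = 500):
        # Each entry is (tension, stop_event, weft_failure) for one pick.
        self.window = deque(maxlen=window_picks)

    def update(self, tension: float, stop_event: bool = False, weft_failure: bool = False) -> dict:
        """Add one pick's readings and return the current window features."""
        self.window.append((tension, stop_event, weft_failure))
        n = len(self.window)
        return {
            "mean_tension": sum(t for t, _, _ in self.window) / n,
            "stop_rate": sum(1 for _, s, _ in self.window if s) / n,
            "weft_failure_rate": sum(1 for _, _, f in self.window if f) / n,
        }


if __name__ == "__main__":
    features = RollingLoomFeatures(window_picks=3)  # tiny window for the demo
    features.update(1.0)
    features.update(2.0)
    features.update(3.0, stop_event=True)
    print(features.update(4.0))  # window now holds tensions 2.0, 3.0, 4.0
```

Recomputing the sums on every update is fine for a sketch. A production job would keep running totals per loom key instead.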

AI and Machine Learning Core

Real-time inference runs the ResNet-50 FPN model on NVIDIA T4 GPUs using TensorRT with INT8 quantisation at consistent sub-50ms latency. Training used a two-phase curriculum — first on synthetic defects generated by KriraAI's proprietary augmentation pipeline applying procedural patterns via elastic deformations and texture blending, then fine-tuned on real imagery with focal loss for class imbalance. The offline loom-correlation model runs centrally, consuming enriched telemetry from Kafka and pushing defect probability scores to edge stations as risk maps. Retraining runs weekly via Kubeflow Pipelines on an on-premise GPU server incorporating new validated annotations.
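Focal loss handles class imbalance by shrinking the loss contribution of examples the model already classifies confidently, so rare defect pixels are not drowned out by easy defect-free background. A minimal binary version follows. The article does not state the hyperparameters used, so the defaults `gamma=2.0` and `alpha=0.25` here are the commonly cited ones and should be read as assumptions.

```python
import math


def focal_loss(p: float, y: int, gamma: float = 2.0, alpha: float = 0.25) -> float:
    """Binary focal loss for one prediction.

    p : predicted probability of the positive (defect) class
    y : true label, 1 for defect and 0 for background
    """
    p_t = p if y == 1 else 1.0 - p              # probability assigned to the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha  # class-balancing weight
    p_t = min(max(p_t, 1e-12), 1.0)             # guard against log(0)
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


if __name__ == "__main__":
    easy = focal_loss(0.95, 1)  # defect predicted confidently
    hard = focal_loss(0.10, 1)  # defect nearly missed
    print(easy < hard)          # True
```

Setting `gamma=0` removes the modulating term and leaves weighted cross-entropy, which is a quick way to check an implementation.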

Integration, Monitoring, and Security

The integration layer uses RabbitMQ as the message broker connecting edge nodes to three consumer services — MES dashboard integration, SAP QM lot disposition via OData with automated AQL grading, and cutting-room defect map generation. Infrastructure monitoring uses Prometheus with custom exporters for GPU utilisation, inference queue depth, and camera frame drop rate, visualised in Grafana. Model performance monitoring runs as a Flink job comparing predictions against human validations. A population stability index monitor on input feature distributions detects camera drift, with alerts when PSI exceeds 0.15. Latency is tracked at p50, p95, and p99 with automated batch-size scaling if p99 breaches 60ms.
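The population stability index compares the binned distribution of a feature in a reference sample with its distribution in recent data. A minimal sketch follows, using the 0.15 alert threshold quoted above. The equal-width binning over the reference range and the choice of feature are assumptions, since the article does not describe them.

```python
import math

PSI_ALERT_THRESHOLD = 0.15  # alert level quoted in the text


def psi(expected, actual, bins: int = 10, eps: float = 1e-6) -> float:
    """Population stability index between a reference sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip out-of-range values
        # Floor each share at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    # Hypothetical mean-brightness values for reference and drifted frames.
    reference = [i / 100 for i in range(100)]
    drifted = [v + 0.3 for v in reference]
    print(psi(reference, reference))                     # 0.0
    print(psi(reference, drifted) > PSI_ALERT_THRESHOLD)  # True
```

A monitor built this way would raise the camera-drift alert whenever the returned value exceeds `PSI_ALERT_THRESHOLD`.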

The platform runs on the client's private factory network with no public endpoints. TLS 1.3 encrypts all data in transit over a dedicated VLAN. Role-based access control enforces four permission tiers with attribute-level data masking. Audit logs for every prediction, override, and disposition decision write to an immutable append-only PostgreSQL table with 730-day retention, satisfying ISO 9001 and OEKO-TEX traceability requirements. The primary interface is a React dashboard on the factory intranet with real-time defect overlays, shift-level analytics, and a mobile tablet view at each station enabling operator review and override — every override feeds back into retraining.

Technology Stack: Engineering Rationale Behind Every Choice

NVIDIA T4 with TensorRT was chosen because the 50ms latency budget at 120 FPS made cloud inference infeasible. Edge GPU deployment was the only viable path for deep learning defect detection at line speed.

Apache Kafka was selected for loom telemetry over MQTT because PLC event data required exactly-once delivery semantics and consumer group management that Kafka handles natively.

Apache Flink was chosen over Spark Structured Streaming because temporal feature engineering on loom data required event-time processing with custom watermarks for out-of-order PLC timestamps.

RabbitMQ was selected for integration because the fan-out to three consumer services matched its exchange-queue model more naturally than Kafka for this low-throughput, high-reliability workload.

PostgreSQL was chosen over a time-series database because defect records are relational — each references a roll, loom, order, and customer — with join-heavy analytical query patterns.

Kubeflow Pipelines ran on-premise because the client's data governance policy prohibited production fabric imagery from leaving the factory network.
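The event-time requirement cited for Apache Flink above can be illustrated with a small reordering buffer. This sketch mimics the idea behind a bounded out-of-orderness watermark in plain Python. It is not Flink code, and `WatermarkBuffer` and its parameters are hypothetical names.

```python
import heapq


class WatermarkBuffer:
    """Reorders events by event time and releases them once the watermark passes."""

    def __init__(self, max_out_of_orderness_ms: int):
        self.delay = max_out_of_orderness_ms
        self.max_seen = float("-inf")  # largest event time observed so far
        self.heap = []                 # pending events ordered by event time
        self.late = []                 # events that arrived behind the watermark
        self._seq = 0                  # tie-breaker so payloads are never compared

    def add(self, event_time_ms: int, payload):
        """Insert one event; return the events now safe to emit, in time order."""
        if event_time_ms < self.max_seen - self.delay:
            self.late.append((event_time_ms, payload))
            return []
        self._seq += 1
        heapq.heappush(self.heap, (event_time_ms, self._seq, payload))
        self.max_seen = max(self.max_seen, event_time_ms)
        watermark = self.max_seen - self.delay
        ready = []
        while self.heap and self.heap[0][0] <= watermark:
            t, _, p = heapq.heappop(self.heap)
            ready.append((t, p))
        return ready


if __name__ == "__main__":
    buf = WatermarkBuffer(max_out_of_orderness_ms=100)
    print(buf.add(1000, "a"))  # []
    print(buf.add(950, "b"))   # []
    print(buf.add(1100, "c"))  # [(950, 'b'), (1000, 'a')]
    print(buf.add(900, "d"))   # [] and the event is recorded as late
```

PLC timestamps that arrive slightly out of order are emitted in the correct sequence, while anything older than the tolerance is set aside rather than silently corrupting a window.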

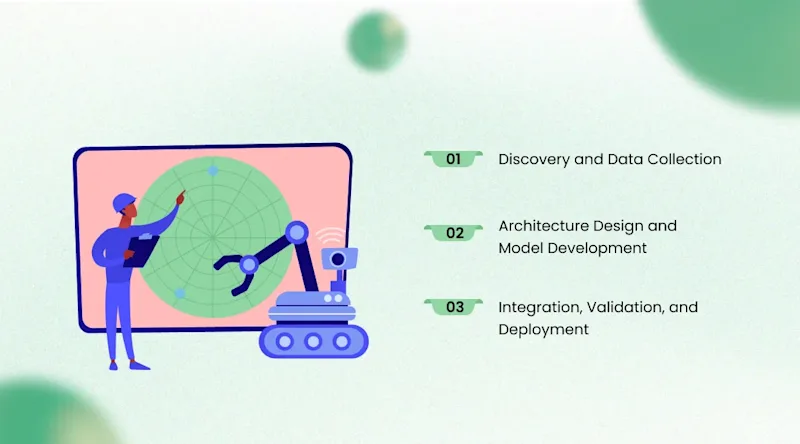

How We Delivered It: The AI Implementation Journey in the Textile Industry

Discovery and Data Collection (Weeks 1 through 6)

KriraAI embedded a three-person team at the client's primary facility for the first six weeks. We installed four high-resolution line-scan cameras and began capturing imagery alongside manual inspection. We discovered that overhead fluorescent lighting produced up to 23% luminance variation across fabric width — sufficient to cause false positives in early model prototypes. KriraAI specified and installed LED line-light units with diffused optics reducing variation below 3%, a hardware prerequisite no software normalisation could replace. The annotation campaign ran in parallel, with three inspectors labelling 186,000 patches. We encountered 14% disagreement on defect boundaries and resolved it through a majority-vote adjudication protocol.
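One way to resolve boundary disagreements by majority vote is to vote per pixel across the annotators' masks. The article does not say whether adjudication was per pixel or per patch, so the per-pixel form below is an assumption, and the function name is illustrative.

```python
def majority_mask(masks):
    """Per-pixel majority vote over equally sized binary masks.

    masks : list of 2-D lists of 0/1, one mask per annotator
    A pixel is kept as defect when more than half of the annotators marked it.
    """
    n = len(masks)
    rows, cols = len(masks[0]), len(masks[0][0])
    return [
        [1 if 2 * sum(m[r][c] for m in masks) > n else 0 for c in range(cols)]
        for r in range(rows)
    ]


if __name__ == "__main__":
    # Three annotators outlining the same defect on a 1x4 strip of pixels.
    a = [[1, 1, 0, 0]]
    b = [[0, 1, 1, 0]]
    c = [[0, 1, 1, 1]]
    print(majority_mask([a, b, c]))  # [[0, 1, 1, 0]]
```

With three annotators this keeps any pixel marked by at least two of them, which trims the fringe where outlines disagree while preserving the core of the defect.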

Architecture Design and Model Development (Weeks 7 through 16)

The critical challenge was achieving accuracy on dark fabrics. Initial precision on navy and black suiting was 71% versus 94% on lighter constructions. KriraAI added CLAHE contrast enhancement with adaptive tile sizing tuned per colour group and augmented training data with synthetic defects on dark backgrounds. After retraining, dark-fabric precision reached 89% and continued improving with production data.

Integration, Validation, and Deployment (Weeks 17 through 26)

SAP QM integration proved more complex than scoped. The client's custom inspection lots required a BAPI wrapper developed jointly with their SAP consultants, consuming five weeks of engineering effort. The lot disposition logic alone required mapping FabricMind's 23 defect categories and four severity levels into the client's custom SAP characteristic matrix, which varied by customer and product group. Validation ran four weeks in shadow mode alongside human inspectors, with every model prediction compared against the human grade. The model achieved 97.4% detection on 8,200 pre-marked samples with a 1.6% false positive rate. Deployment then rolled out across all stations in three phases over three weeks: the primary grey fabric station first, then two additional grey stations, then the final finishing-line inspection station.
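Shadow-mode validation comes down to comparing each model decision with the reference grade and counting the four outcomes. The sketch below shows one way to compute the two headline metrics. The article does not define its false positive rate, so the FP / (FP + TN) form is an assumption, and the counts in the usage lines are synthetic values chosen only to reproduce the reported percentages.

```python
def shadow_metrics(records):
    """Detection rate and false positive rate from paired decisions.

    records : iterable of (reference_is_defect, model_says_defect) booleans
    """
    tp = fn = fp = tn = 0
    for reference_defect, model_defect in records:
        if reference_defect and model_defect:
            tp += 1
        elif reference_defect:
            fn += 1
        elif model_defect:
            fp += 1
        else:
            tn += 1
    return {
        "detection_rate": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }


if __name__ == "__main__":
    # Synthetic counts, not the client's validation data.
    records = (
        [(True, True)] * 974 + [(True, False)] * 26
        + [(False, True)] * 16 + [(False, False)] * 984
    )
    print(shadow_metrics(records))
```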

Results the Client Achieved

Within six months of full deployment, the FabricMind platform delivered outcomes exceeding original targets. The results were measured against the same production volume, customer base, and product mix as the prior year to ensure a clean comparison.

Defect detection rate increased from 62% to 97.4% — the computer vision fabric inspection system caught defects human inspectors consistently missed, particularly those below 2mm width.

False positive rate held at 1.6% — inspector override rates dropped below 4% within three months.

Fabric waste reduced by 41% — earlier detection and defect-aware cutting maps salvaged fabric previously downgraded or discarded.

Customer returns dropped by 63% measured year-over-year against the same customer base.

Annual quality losses reduced by an estimated 11.2 crore rupees from lower returns, rework, waste, and eliminated penalty deductions.

Inspection throughput increased by 35% — line speed raised from 18 to 24 metres per minute because the AI maintained accuracy at higher speeds.

Two major European buyers accepted the AI-assisted inspection reports as equivalent to third-party certification, removing the procurement barrier threatening 28% of export revenue. The system achieved full payback within five months of deployment.
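The detection figures above are easier to interpret as escape rates, meaning the share of defects that get past inspection. A short worked calculation using only the 62% and 97.4% detection rates reported here; the function names are illustrative.

```python
def escape_rate(detection_rate: float) -> float:
    """Share of defects that pass inspection undetected."""
    return 1.0 - detection_rate


def escape_summary(before_detection: float, after_detection: float) -> dict:
    """Compare escape rates before and after a change in detection rate."""
    before = escape_rate(before_detection)
    after = escape_rate(after_detection)
    return {
        "escape_before_pct": round(before * 100, 1),
        "escape_after_pct": round(after * 100, 1),
        "fold_reduction": round(before / after, 1),
        "relative_reduction_pct": round((before - after) / before * 100, 1),
    }


if __name__ == "__main__":
    print(escape_summary(0.62, 0.974))
    # {'escape_before_pct': 38.0, 'escape_after_pct': 2.6,
    #  'fold_reduction': 14.6, 'relative_reduction_pct': 93.2}
```

Out of every 100 defects, about 38 escaped manual inspection and about 2.6 escape the automated system, a reduction of roughly 14 to 15 times in undetected defects.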

What This Architecture Makes Possible Next

FabricMind was designed to expand beyond initial deployment. Adding new inspection stations requires deploying additional camera-GPU edge units that connect to existing Kafka and RabbitMQ infrastructure without central platform changes. The defect taxonomy is extensible — adding a class requires approximately 3,000 annotated examples and a fine-tuning cycle completing in under six hours.

[Figure: The Future of AI Quality in Textiles. 01: Multi-Stage Inline Inspection; 02: Autonomous Loom Parameter Tuning; 03: Predictive Yield Optimisation; 04: Customer-Specific Grading Profiles]

The client's two-year roadmap includes three extensions the architecture supports directly. First, finishing-stage inspection cameras — the ResNet-50 FPN generalises to finished fabric with transfer learning on a finishing-specific dataset, and the edge inference hardware is identical to the existing stations, making deployment a configuration exercise rather than a re-architecture. Second, closing the loop from defect detection to loom parameter correction via OPC-UA write commands, using the correlation model's identification of which operational parameters drive specific defect types. Third, customer-specific grading profiles applying different AQL thresholds per buyer drawn from SAP order data, enabling automated lot disposition without manual quality review for standard production orders. Other textile manufacturers can apply the same architecture pattern — edge computer vision with centralised telemetry correlation — to their own facilities, adapting the defect taxonomy and loom integration to their specific equipment and product range.

Conclusion

Three insights from this engagement define what matters most. Technically, the decision to use deformable convolutional layers was the most important architectural choice: fabric defects are geometrically irregular within a regular weave, and adaptive receptive fields captured defects that rigid filters missed entirely. Operationally, the greatest impact came from connecting loom telemetry to inspection in a way no manual process could, shifting quality management from reactive grading to predictive intervention. Strategically, the AI inspection capability became a commercial asset, because AI-assisted inspection certification directly influenced buyer procurement decisions and protected export revenue.

KriraAI brings this same combination of deep technical engineering and real-world delivery discipline to every engagement. From the factory floor lighting audit in week one to the SAP BAPI integration in week twenty, every phase reflected the rigour of a team that builds and deploys production AI systems under real industrial constraints. If your manufacturing operation is leaving quality intelligence on the table because your data is fragmented and your inspection is manual, bring that challenge to KriraAI.

FAQs

How is AI used for quality control in textile manufacturing?

AI is used for quality control in textile manufacturing primarily through computer vision systems that inspect fabric in real time on production lines. In the platform KriraAI built, high-resolution cameras capture fabric at 120 frames per second, and a deep learning defect detection model classifies defects into 23 categories with a 97.4% detection rate. This replaces manual inspection that typically captures only 55% to 70% of defects. AI quality control systems for textiles also integrate with loom telemetry to correlate defects with upstream machine behaviour, enabling predictive quality management rather than reactive grading.

What is computer vision fabric inspection and how does it work?

Computer vision fabric inspection uses camera systems and trained neural networks to automatically detect, classify, and locate defects during production. The system captures high-resolution images, processes them through a model that recognises defect patterns against the weave structure, and outputs defect locations and severity grades in real time. In the KriraAI deployment, the deep learning defect detection model was trained on 186,000 annotated images covering fourteen constructions and uses TensorRT-optimised edge inference to achieve sub-50ms processing, enabling inspection above 22 metres per minute without production slowdown.

How much can AI reduce fabric defects?

The reduction depends on the baseline being replaced and the defect types present. In this engagement, AI inspection raised detection from 62% to 97.4%, meaning the share of defects reaching downstream processes dropped from 38% to approximately 2.6%. This translated to a 63% reduction in customer returns and a 41% reduction in waste. The greatest improvement was on low-contrast defects in dark fabrics and defects below 2mm width, where human inspectors had historically struggled most due to the speed and lighting constraints of manual inspection at production-line velocities.

What is the ROI of AI in textile manufacturing?

The ROI varies by facility size and the cost structure of quality failures. In this engagement, the platform reduced annual quality losses by 11.2 crore rupees encompassing returns, rework, waste, and buyer penalties. Full payback was achieved within five months. Inspection throughput also increased by 35% because the AI maintained accuracy at higher line speeds, increasing effective station capacity without additional capital investment or staffing. Two export buyers accepted AI inspection reports as certification equivalents, protecting revenue streams that represented 28% of the client's export business.

How long does AI implementation take in the textile industry?

The timeline for AI implementation in the textile industry depends on data readiness, integration complexity, and fabric construction coverage. KriraAI completed this engagement in 26 weeks from kickoff to full deployment. Data collection and annotation consumed six weeks, a foundational phase because model accuracy depends directly on annotation quality. Model development and architecture build took ten weeks. Integration, validation, and phased rollout required the final ten weeks, with SAP QM integration being the most time-intensive. Manufacturers should plan six to eight months for production-grade deployment across multiple constructions.

Founder & CEO

Divyang Mandani is the CEO of KriraAI, driving innovative AI and IT solutions with a focus on transformative technology, ethical AI, and impactful digital strategies for businesses worldwide.