AI in Financial Compliance: How KriraAI Cut False Alerts by 60%

Every month, the transaction monitoring system at one of India's leading private sector banks generated over 12,000 alerts flagging potentially suspicious activity across retail, corporate, and trade finance channels. Of those 12,000 alerts, approximately 94% turned out to be false positives after manual investigation. That meant a team of 85 compliance analysts was spending the vast majority of its time reviewing transactions that posed no actual risk, while genuine suspicious patterns slipped through because investigators were overwhelmed by noise.

The cost of this inefficiency was staggering. The bank estimated that each false positive alert consumed an average of 2.4 analyst hours to investigate, close, and document. Multiplied across 11,280 false alerts per month, the bank was burning over 27,000 analyst hours monthly on work that produced no regulatory value and no risk reduction.

When the bank's Chief Compliance Officer approached KriraAI, the mandate was not to build a dashboard or generate a report. The mandate was to replace a brittle, rule based transaction monitoring system with an intelligent platform that could distinguish genuine risk from benign anomalies at scale.

This blog covers the complete story of that transformation, including the operational problem in full detail, the AI platform KriraAI designed and delivered, the technical architecture powering it, the implementation challenges we encountered and resolved, and the measurable results the bank achieved within seven months of going live.
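The workload figures in the introduction follow from simple arithmetic. As a quick check, the short Python sketch below reproduces them from the numbers quoted in the text; the variable names are illustrative.

```python
# Inputs are the figures quoted in the article; variable names are illustrative.
monthly_alerts = 12_000
false_positive_rate = 0.94
hours_per_false_alert = 2.4

# Number of alerts closed as false positives each month.
false_alerts = round(monthly_alerts * false_positive_rate)

# Analyst hours spent on those false positives each month.
wasted_hours = false_alerts * hours_per_false_alert

print(false_alerts)         # 11280
print(round(wasted_hours))  # 27072
```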

The Problem KriraAI Was Called In To Solve

The bank's existing transaction monitoring infrastructure was built on a commercially licensed rules engine that had been deployed eight years earlier. Over those eight years, the compliance team had accumulated over 1,400 detection rules, each designed to flag specific transaction patterns that might indicate money laundering, terrorist financing, or sanctions evasion. The rules operated on simple threshold logic: if a customer's transaction volume exceeded a certain amount within a defined window, or if funds moved through a jurisdiction on a watchlist, or if the transaction type matched a known typology pattern, an alert was generated.
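To make the threshold logic concrete, here is a minimal sketch of how two such rules might look. The threshold value, the jurisdiction codes, and every name in the block are hypothetical; the bank's actual rules are not described at this level of detail.

```python
from dataclasses import dataclass

@dataclass
class Transaction:
    customer_id: str
    amount: float
    jurisdiction: str

# Hypothetical values for illustration only.
VOLUME_THRESHOLD = 1_000_000.0
WATCHLIST_JURISDICTIONS = {"XX", "YY"}

def rule_alerts(window: list[Transaction]) -> list[str]:
    """Return the names of the rules fired by one customer's transaction window."""
    fired = []
    # Rule 1: total volume in the window exceeds a static threshold.
    if sum(t.amount for t in window) > VOLUME_THRESHOLD:
        fired.append("volume_threshold")
    # Rule 2: any transaction touches a watchlisted jurisdiction.
    if any(t.jurisdiction in WATCHLIST_JURISDICTIONS for t in window):
        fired.append("watchlist_jurisdiction")
    return fired

window = [
    Transaction("C1", 600_000.0, "IN"),
    Transaction("C1", 450_000.0, "IN"),
]
print(rule_alerts(window))  # ['volume_threshold']
```

Each rule looks at one dimension against a fixed cut-off, so a busy but legitimate business trips the volume rule while deposits structured just under the threshold pass silently.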

Why Rules Alone Could Not Keep Up

The fundamental limitation of this approach was that each rule operated in isolation, evaluating a single dimension of behaviour against a static threshold. Real money laundering schemes rarely trigger one rule cleanly. They involve layered transactions across multiple accounts, structured deposits designed to stay below reporting thresholds, rapid movement of funds through shell entities, and the deliberate use of legitimate business activity as cover. The rules engine had no ability to evaluate the relationships between entities, no mechanism to learn from investigator decisions over time, and no capacity to adapt its thresholds based on changing customer behaviour or emerging typologies.

The result was a system that generated enormous volumes of alerts for routine business activity while failing to detect sophisticated laundering schemes that operated below or between the static thresholds. The bank's internal audit had flagged three instances in the prior eighteen months where suspicious activity was identified by external regulators before the bank's own monitoring system had raised an alert. Each of these regulatory findings carried reputational risk, potential enforcement action, and the implicit message that the bank's compliance infrastructure was not keeping pace with the complexity of financial crime.

The Human Cost of False Positive Overload

Beyond the detection gap, the false positive burden was degrading the quality of investigations that analysts could perform on genuine alerts. With 94% of their caseload turning out to be noise, investigators developed pattern blindness, treating every alert as likely false until proven otherwise. Investigation narratives became formulaic. The average time spent on alerts that were ultimately filed as Suspicious Transaction Reports had decreased by 22% over two years, not because the process became more efficient, but because analysts were rushing through genuine cases to keep up with volume. The compliance leadership recognised that the problem was not a staffing shortage. Hiring more analysts to review more false positives would simply scale the inefficiency. The problem was architectural. The detection system itself needed to become intelligent enough to separate signal from noise before alerts reached human investigators.

What KriraAI Built: An Intelligent Transaction Risk Platform

KriraAI designed and delivered a platform called ComplianceGraph, an AI powered transaction monitoring and investigation assistance system that replaced the bank's static rules engine with a multi model architecture capable of evaluating transaction risk across behavioural, relational, and temporal dimensions simultaneously.

Graph Based Entity Risk Scoring

The core innovation of ComplianceGraph is its use of graph neural networks to model the relationships between entities, accounts, and transactions as a dynamic knowledge graph. Rather than evaluating each transaction in isolation, the system constructs a continuously updated graph where nodes represent customers, accounts, counterparties, and beneficial owners, while edges represent transaction flows, shared addresses, common directors, and other relational signals. KriraAI trained a GraphSAGE based model using inductive learning on the bank's historical transaction network, enabling the model to generate risk embeddings for new or previously unseen entities without requiring full graph retraining. The graph based approach captures network level risk signals, such as funds flowing through clusters of recently incorporated entities with shared directorships, that no individual rule could detect.
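The inductive property described above comes from GraphSAGE learning shared aggregation weights rather than one embedding per node. The sketch below shows a single layer with a mean aggregator in plain Python. It is a simplified illustration, not the production model: the node names, feature values, and weight matrix are made up, and neighbour sampling and output normalisation are omitted.

```python
def mean_vector(vectors):
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def sage_layer(features, neighbours, W):
    """One mean-aggregator layer: h_v' = ReLU(W . concat(h_v, mean of neighbour h_u))."""
    out = {}
    for node, h in features.items():
        nbrs = neighbours.get(node, [])
        agg = mean_vector([features[u] for u in nbrs]) if nbrs else [0.0] * len(h)
        out[node] = [max(0.0, z) for z in matvec(W, h + agg)]
    return out

# Hypothetical two-dimensional node features and a toy weight matrix.
features = {"acct_A": [1.0, 0.0], "acct_B": [0.0, 1.0], "acct_new": [0.5, 0.5]}
neighbours = {"acct_A": ["acct_B"], "acct_B": ["acct_A"], "acct_new": ["acct_A", "acct_B"]}
W = [[0.5, 0.0, 0.5, 0.0],
     [0.0, 0.5, 0.0, 0.5]]

embeddings = sage_layer(features, neighbours, W)
print(embeddings["acct_new"])  # [0.5, 0.5]
```

Because `W` is shared across all nodes, `acct_new` receives an embedding from its own features and its neighbours' features without any retraining, which is the behaviour the paragraph above relies on.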

Temporal Sequence Analysis

Alongside the graph layer, KriraAI deployed a temporal convolutional network that analyses the sequence of transactions for each account over rolling 90 day windows. This model learns the normal behavioural signature for each customer segment and flags deviations that indicate structuring, layering, or sudden changes in transaction velocity. The temporal model was trained using a contrastive learning approach: pairs consisting of a genuinely suspicious sequence, drawn from confirmed STR filings, and a benign sequence were used to train the model to maximise the distance between their embeddings in the latent space.
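One common way to express this objective is a margin based contrastive loss. The sketch below assumes that formulation; the article does not state which loss was used, and the embeddings and margin here are invented for illustration.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def contrastive_loss(emb_a, emb_b, same_class, margin=1.0):
    """Margin based contrastive loss for one pair of embeddings.

    Same-class pairs are pulled together (loss grows with distance).
    Different-class pairs are pushed apart until they are at least `margin` apart.
    """
    d = euclidean(emb_a, emb_b)
    if same_class:
        return d ** 2
    return max(0.0, margin - d) ** 2

# Hypothetical embeddings of one suspicious and two benign sequences.
suspicious = [0.9, 0.1]
benign_close = [0.8, 0.2]   # too close to the suspicious embedding
benign_far = [-0.5, 0.9]    # already further away than the margin

print(contrastive_loss(suspicious, benign_close, same_class=False) > 0.7)  # True
print(contrastive_loss(suspicious, benign_far, same_class=False))          # 0.0
```

The loss is non-zero only while a suspicious and a benign sequence sit closer than the margin, so training effort concentrates on the pairs that are still hard to tell apart.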

Automated Investigation Narrative Generation

The third component is a retrieval augmented generation pipeline that assists investigators by automatically generating draft investigation narratives for alerts that the AI scoring layer escalates. KriraAI fine tuned a large language model on a corpus of 14,000 historical investigation reports authored by the bank's own analysts, ensuring that the generated narratives matched the bank's internal style, regulatory terminology, and documentation standards. The RAG pipeline retrieves relevant customer profile data, transaction history, prior alert history, and applicable regulatory guidance from a vector store indexed using HNSW, and feeds this context into the language model to produce structured, citation grounded narratives that investigators can review, edit, and approve rather than drafting from scratch.
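The retrieve-then-generate flow can be sketched in a few lines. This version is a stand-in under stated assumptions: it uses brute force cosine similarity instead of an HNSW index, toy three-dimensional vectors instead of real embeddings, and a hypothetical prompt template. The document ids and texts are invented.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve(query_vec, store, k=2):
    """Return the k stored documents most similar to the query vector."""
    ranked = sorted(store, key=lambda doc: cosine(query_vec, doc["vector"]), reverse=True)
    return ranked[:k]

def build_prompt(alert_summary, docs):
    """Assemble a prompt whose context lines carry ids the narrative can cite."""
    context = "\n".join(f"[{d['id']}] {d['text']}" for d in docs)
    return (
        "Draft an investigation narrative. Cite a source id for every claim.\n"
        f"Alert: {alert_summary}\n"
        f"Context:\n{context}"
    )

# Hypothetical store entries with toy embeddings.
store = [
    {"id": "profile-1", "vector": [1.0, 0.0, 0.0], "text": "Customer profile summary"},
    {"id": "guidance-7", "vector": [0.0, 1.0, 0.0], "text": "Regulatory guidance excerpt"},
    {"id": "alert-3", "vector": [0.9, 0.1, 0.0], "text": "Prior alert closed as benign"},
]

docs = retrieve([1.0, 0.0, 0.0], store, k=2)
prompt = build_prompt("Unusual inward transfers", docs)
print([d["id"] for d in docs])  # ['profile-1', 'alert-3']
```

Carrying the source id into the prompt is what makes the resulting narrative citation grounded: each statement can point back to a retrieved record that an investigator can open.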

Solution Architecture for AI in Financial Compliance

Data Ingestion and Pipeline

The data pipeline ingests transaction data from the bank's core banking system through change data capture using Debezium connectors on the underlying Oracle database, streaming every insert and update into an Apache Kafka cluster in near real time. Customer master data, account metadata, and KYC records are extracted via batch CDC on a six hour cycle from the bank's CRM and onboarding systems. Sanctions and PEP watchlist data is pulled daily from four external providers via scheduled REST API calls. All ingested data flows through Apache Flink stream processing jobs that perform schema normalisation, entity resolution across the bank's multiple customer identification systems, and temporal feature engineering, computing rolling aggregates such as 7 day, 30 day, and 90 day transaction volumes, counterparty diversity indices, and jurisdiction risk scores. The enriched data lands in a feature store built on Feast, serving both an offline path for model training via Parquet files in S3 and an online path backed by Redis for sub 10 millisecond feature retrieval during real time inference.
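The rolling aggregates mentioned above are straightforward windowed sums. The sketch below computes them in batch Python purely for illustration; in the platform they are computed by Flink jobs on the stream. The transaction dates, amounts, and feature names are hypothetical.

```python
from datetime import date, timedelta

def rolling_volume(transactions, as_of, days):
    """Sum amounts for transactions in the `days` days ending at `as_of` (inclusive)."""
    start = as_of - timedelta(days=days)
    return sum(amount for day, amount in transactions if start < day <= as_of)

# Hypothetical (date, amount) pairs for one account.
transactions = [
    (date(2024, 1, 15), 100.0),
    (date(2024, 3, 10), 250.0),
    (date(2024, 3, 28), 75.0),
]
as_of = date(2024, 3, 31)

# One feature per window length, keyed the way a feature store row might be.
features = {f"volume_{d}d": rolling_volume(transactions, as_of, d) for d in (7, 30, 90)}
print(features)  # {'volume_7d': 75.0, 'volume_30d': 325.0, 'volume_90d': 425.0}
```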

AI and Machine Learning Core

The ML core operates three models in an ensemble scoring architecture. The GraphSAGE model runs inference on the entity relationship graph stored in Neo4j, updated incrementally as new transactions arrive via Kafka consumers. The temporal convolutional network processes per account transaction sequences with inference served through ONNX Runtime for consistent sub 30 millisecond latency. An XGBoost gradient boosted ensemble model sits atop both neural outputs, combining graph risk embeddings, temporal anomaly scores, and 47 handcrafted features from the feature store into a final risk probability that determines whether an alert is generated, suppressed, or routed for enhanced review. Model training runs on a dedicated GPU cluster provisioned on AWS using g5.2xlarge instances, orchestrated by Kubeflow Pipelines on Amazon EKS with experiment tracking managed through MLflow. The RAG pipeline for narrative generation runs a fine tuned Mistral 7B model served via vLLM with continuous batching, connected to a Qdrant vector store containing embedded investigation reports and regulatory guidance indexed using HNSW with 128 dimensional embeddings generated by a fine tuned E5 encoder.
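The hand-off from the two neural models to the final classifier, and from the risk probability to a routing decision, can be sketched as follows. The cut-off values and function names are hypothetical, and the XGBoost model itself is left out; only its input assembly and the three way routing are shown.

```python
def ensemble_input(graph_embedding, temporal_score, handcrafted):
    """Concatenate the three signal groups into one flat feature vector."""
    return list(graph_embedding) + [temporal_score] + list(handcrafted)

def route_alert(risk_probability, suppress_below=0.30, review_above=0.80):
    """Map a final risk probability to one of three outcomes.

    The two cut-offs are illustrative; the article does not state the real ones.
    """
    if not 0.0 <= risk_probability <= 1.0:
        raise ValueError("risk_probability must be in [0, 1]")
    if risk_probability < suppress_below:
        return "suppress"
    if risk_probability >= review_above:
        return "enhanced_review"
    return "generate_alert"

# Toy inputs: a 3-dimensional graph embedding, one temporal score, 3 handcrafted features.
vector = ensemble_input([0.12, -0.40, 0.88], 0.73, [1.0, 0.0, 5.5])
print(len(vector))  # 7
print(route_alert(0.10), route_alert(0.55), route_alert(0.92))
# suppress generate_alert enhanced_review
```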

Integration Layer

KriraAI designed the integration layer as an event driven architecture with Kafka as the backbone. When the ensemble model generates an alert, it publishes a structured event to a dedicated Kafka topic. Three consumer services subscribe to this topic. The case management service writes the alert into the bank's Actimize system via its REST API with versioned contracts in OpenAPI 3.1. The regulatory reporting service prepares STR filing packages when investigators confirm suspicious activity, formatting output according to FIU India's prescribed XML schema. The audit trail service writes every model prediction, feature vector, risk score, and investigator action to an immutable append only audit log in Amazon S3 with object lock, ensuring complete reproducibility for regulatory examination.
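The fan-out pattern can be illustrated without a broker. The sketch below models one alert event and delivers the same serialised payload to three in-memory inboxes standing in for the three consumer services. The field names and identifiers are hypothetical, and Kafka itself is deliberately left out.

```python
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class AlertEvent:
    # Hypothetical schema; the real event contract is not given in the article.
    alert_id: str
    entity_id: str
    risk_score: float
    route: str
    model_version: str

def serialise(event: AlertEvent) -> str:
    """Serialise the event as JSON with stable key order."""
    return json.dumps(asdict(event), sort_keys=True)

# In-memory stand-ins for the three services subscribed to the alert topic.
received = {"case_management": [], "regulatory_reporting": [], "audit_trail": []}

def publish(event: AlertEvent) -> None:
    """Deliver one serialised event to every subscriber independently."""
    payload = serialise(event)
    for inbox in received.values():
        inbox.append(json.loads(payload))

publish(AlertEvent("A-0001", "E-42", 0.91, "enhanced_review", "ensemble-v1"))
print(received["audit_trail"][0]["risk_score"])  # 0.91
```

Because each consumer reads the same event independently, a new downstream service can be added as another subscriber without touching the producer.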

Monitoring and Observability

Infrastructure metrics including container utilisation, Kafka consumer lag, and inference latency at p50, p95, and p99 are collected by Prometheus and visualised in Grafana. Model performance monitoring runs as a Flink job comparing predictions against investigation outcomes. KriraAI implemented population stability index monitoring on all 47 input features, with automated Slack alerts when PSI exceeds 0.2. A KL divergence monitor tracks the output score distribution weekly against the training distribution. Automated retraining triggers when ensemble precision on confirmed suspicious cases drops below 78% over a rolling 30 day window.
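Population stability index compares how a feature is distributed across bins at training time versus in production. A minimal sketch of the calculation, using the 0.2 alert threshold from the text and made-up bin counts, looks like this:

```python
import math

def psi(expected_counts, actual_counts, eps=1e-6):
    """Population stability index over matching histogram bins.

    PSI = sum over bins of (actual% - expected%) * ln(actual% / expected%).
    `eps` guards against empty bins.
    """
    e_total = sum(expected_counts)
    a_total = sum(actual_counts)
    value = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        value += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return value

# Hypothetical bin counts for one feature.
training_bins = [250, 250, 250, 250]
live_bins = [100, 200, 300, 400]

score = psi(training_bins, live_bins)
print(round(score, 3))  # 0.228
print(score > 0.2)      # True, so this feature would raise an alert
```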

Security and Compliance

The entire platform runs within the bank's private VPC on AWS with no public endpoints. All data in transit is encrypted using TLS 1.3, and all data at rest uses AWS KMS with customer managed keys. Role based access control with four permission tiers enforces attribute level data masking so that customer PII is visible only to authorised investigation roles. The platform complies with RBI's Master Direction on KYC, PMLA requirements, and FIU India's reporting standards. No customer data leaves the private VPC at any point.

User Interface and Delivery

The primary interface is a React based dashboard served through the bank's internal gateway, presenting a prioritised alert queue sorted by AI risk score. Each alert card displays entity relationship graphs, temporal behaviour timelines, ensemble risk breakdowns, and AI generated investigation narratives. Investigators can accept, modify, or reject narratives, and every interaction feeds into the training dataset. A management view provides aggregate compliance metrics, false positive trends, and SLA tracking.

Technology Stack: Why Each Choice Was Made

Every technology was selected based on compatibility with the bank's AWS infrastructure, real time latency requirements, and information security policies prohibiting data egress.

Apache Kafka on Amazon MSK was chosen over Kinesis because the bank required topic level partitioning by business line with exactly once delivery semantics.

Apache Flink was selected over Spark Structured Streaming because entity resolution and temporal feature engineering required event time windowing with late arrival handling that Flink's watermark semantics manage more reliably.

Neo4j was chosen over Amazon Neptune because Cypher provided more expressive traversal patterns for ad hoc investigation queries, and Neo4j's APOC and Graph Data Science libraries offered native graph algorithm support, including community detection.

vLLM was chosen for LLM serving because its continuous batching and PagedAttention memory management delivered 3.2x throughput improvement over standard HuggingFace inference.

Qdrant was selected over Pinecone for the vector store because it could be deployed as a self managed instance within the private VPC, satisfying the bank's zero data egress requirement.

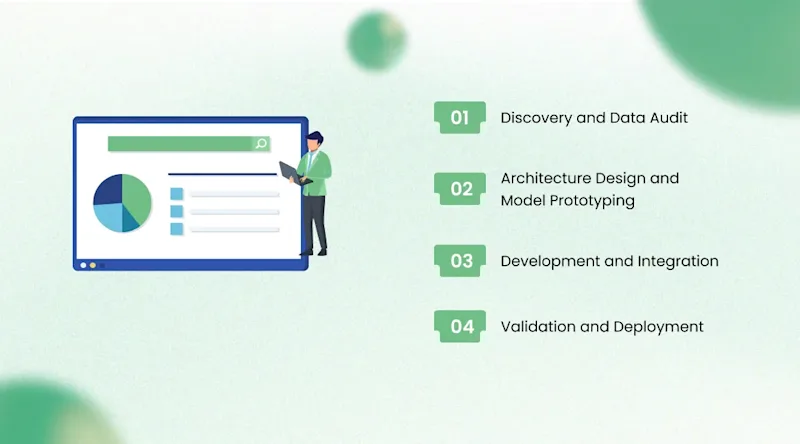

How We Delivered It: The AI Implementation Journey in Financial Services

Discovery and Data Audit (Weeks 1 through 5)

KriraAI embedded a four person team at the bank's compliance operations centre. The objective was understanding the true state of the data, not the state described in documentation. We discovered that core banking transaction records had inconsistent counterparty identification across retail and corporate channels. The same entity could appear under three different identifiers depending on whether the transaction originated through branch, internet banking, or SWIFT. This gap meant models trained on raw data would undercount relationship signals by approximately 18%. KriraAI built a probabilistic entity resolution pipeline using fuzzy name matching, address normalisation, and shared attribute clustering that resolved 94% of duplicates with 97.2% precision.
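The name matching part of entity resolution can be sketched with the Python standard library. This is a simplified stand-in: it covers only fuzzy name comparison, not the address normalisation or shared attribute clustering mentioned above, and the suffix list, threshold, and sample records are hypothetical.

```python
import re
from difflib import SequenceMatcher

LEGAL_SUFFIXES = {"pvt", "private", "ltd", "limited"}  # illustrative, not exhaustive

def normalise(name: str) -> str:
    """Lowercase, strip punctuation, and drop common legal suffixes."""
    cleaned = re.sub(r"[^a-z0-9 ]", " ", name.lower())
    return " ".join(t for t in cleaned.split() if t not in LEGAL_SUFFIXES)

def name_similarity(a: str, b: str) -> float:
    """Similarity ratio in [0, 1] between two normalised names."""
    return SequenceMatcher(None, normalise(a), normalise(b)).ratio()

def same_entity(rec_a: dict, rec_b: dict, threshold: float = 0.85) -> bool:
    """Treat two records as one entity when their names are similar enough."""
    return name_similarity(rec_a["name"], rec_b["name"]) >= threshold

# The same counterparty as it might appear via two channels, plus an unrelated one.
branch = {"id": "BR-101", "name": "Shree Traders Pvt. Ltd."}
swift = {"id": "SW-9F2", "name": "SHREE TRADERS PRIVATE LIMITED"}
other = {"id": "IB-555", "name": "Apex Exports Ltd"}

print(same_entity(branch, swift))  # True
print(same_entity(branch, other))  # False
```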

Architecture Design and Model Prototyping (Weeks 6 through 12)

Architecture design ran in parallel with model prototyping on curated clean data. The most significant finding was that GraphSAGE performance varied dramatically by graph construction methodology. With edges defined solely by direct transaction flows, the model achieved an AUC of 0.71. When KriraAI enriched the graph with shared attribute edges (common registered addresses, shared directors, linked phone numbers), AUC improved to 0.89. This enrichment required additional KYC system integration beyond the original scope, but the performance gain justified it.

Development and Integration (Weeks 13 through 24)

Development deployed ten engineers across ML, data, backend, and frontend workstreams. The most challenging integration was with Actimize. The bank's instance was heavily customised with proprietary alert schemas. KriraAI reverse engineered the custom schema and built a translation layer that consumed six weeks of engineering effort. The RAG pipeline required careful prompt engineering to prevent narrative fabrication. KriraAI implemented a citation verification step that cross references every claim against source data in the vector store, flagging unsupported statements before presentation to investigators.
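A citation verification step of this kind can be approximated with simple checks. The sketch below is a crude proxy under stated assumptions, not the production verifier: it expects citations in a hypothetical `[source-id]` format, flags sentences with no citation or an unknown id, and flags sentences whose numbers do not appear in the cited source text. The substring test for numbers is deliberately naive.

```python
import re

CITATION = re.compile(r"\[([\w-]+)\]")

def unsupported_statements(narrative: str, sources: dict) -> list:
    """Return the sentences that lack a valid citation or quote unsupported numbers."""
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", narrative.strip()):
        cited = CITATION.findall(sentence)
        # A claim with no citation, or citing an id that was never retrieved, is flagged.
        if not cited or any(c not in sources for c in cited):
            flagged.append(sentence)
            continue
        # Every number in the sentence must appear somewhere in the cited sources.
        numbers = re.findall(r"\d[\d,]*", CITATION.sub("", sentence))
        evidence = " ".join(sources[c] for c in cited)
        if any(n not in evidence for n in numbers):
            flagged.append(sentence)
    return flagged

# Hypothetical retrieved sources and a draft narrative.
sources = {
    "txn-1": "14 inward transfers recorded in March",
    "kyc-2": "Customer declared occupation: textile trader",
}
narrative = (
    "The account received 14 inward transfers in March [txn-1]. "
    "The customer is a textile trader [kyc-2]. "
    "Funds were moved to 3 offshore accounts [txn-1]. "
    "The customer has prior convictions."
)

for sentence in unsupported_statements(narrative, sources):
    print(sentence)
```

Here the third sentence is flagged because the cited record does not mention the figure it quotes, and the fourth because it cites nothing at all.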

Validation and Deployment (Weeks 25 through 32)

Validation used twelve months of held out data containing 847 confirmed suspicious cases and over 130,000 false positive closures. The model achieved 60% false positive reduction while maintaining 98.3% true positive detection. Deployment followed a phased rollout across retail, corporate, and trade finance over four weeks, with each phase running ten days in shadow mode alongside the existing rules engine.
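Shadow mode makes the two headline validation metrics easy to compute, because both systems score the same transactions. The sketch below shows the calculation on a toy set of alert ids; the ids and counts are invented and are not the bank's validation data.

```python
def shadow_mode_metrics(legacy_alerts, model_alerts, confirmed_suspicious):
    """Compare a candidate model against the legacy engine on the same period.

    Returns (false positive reduction, share of confirmed cases still alerted).
    """
    legacy_fp = legacy_alerts - confirmed_suspicious
    model_fp = model_alerts - confirmed_suspicious
    fp_reduction = 1 - len(model_fp) / len(legacy_fp)
    detection = len(model_alerts & confirmed_suspicious) / len(confirmed_suspicious)
    return fp_reduction, detection

# Toy alert ids: the legacy engine raised 10 alerts, 2 of which were confirmed.
legacy_alerts = set(range(1, 11))
confirmed_suspicious = {1, 2}
model_alerts = {1, 2, 3, 4, 5}

fp_reduction, detection = shadow_mode_metrics(legacy_alerts, model_alerts, confirmed_suspicious)
print(fp_reduction, detection)  # 0.625 1.0
```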

Results the Bank Achieved

Within seven months of full deployment, ComplianceGraph delivered measurable outcomes that transformed compliance operations.

False positive rate reduced from 94% to 37.6%, a 60% reduction in alert noise reaching investigators.

Average investigation time reduced by 67%, from 2.4 hours to 47 minutes per alert, driven by AI prioritisation and auto generated narratives.

STR filing preparation time reduced by 74%, from 6.2 hours to 1.6 hours per filing.

42 previously undetected suspicious patterns identified through graph based network analysis in the first six months.

Monthly analyst capacity effectively tripled, absorbing a 30% transaction volume increase without additional hiring.

Regulatory examination findings dropped to zero in the first post deployment audit, compared to three findings in the prior eighteen months.

The bank's MLRO confirmed payback within eight months. Annualised savings exceeded 9.4 crore rupees from reduced investigation costs, faster STR processing, and avoided regulatory penalties.
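The percentages in the results list are relative reductions, and they can be reproduced from the before and after figures quoted there. A short Python check, with illustrative variable names:

```python
def relative_reduction(before, after):
    """Fractional reduction from `before` to `after`."""
    return (before - after) / before

fp_rate = relative_reduction(94.0, 37.6)            # false positive rate, in percent
investigation = relative_reduction(2.4 * 60, 47)    # minutes per alert
str_prep = relative_reduction(6.2, 1.6)             # hours per STR filing

print(round(fp_rate, 2), round(investigation, 2), round(str_prep, 2))  # 0.6 0.67 0.74

# Alerts handled per analyst hour scale with before/after investigation time.
print(round((2.4 * 60) / 47, 1))  # 3.1
```

The last figure is why a 67% cut in investigation time corresponds to roughly triple the analyst capacity.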

What This Architecture Makes Possible Next

ComplianceGraph was designed to extend beyond AML transaction monitoring. The graph neural network infrastructure and entity resolution pipeline support multiple compliance and risk use cases without architectural changes.

The bank's two year roadmap includes three extensions. First, integrating sanctions screening into the graph layer so sanctions hits are evaluated in context of the entity's full relationship network rather than as isolated name matches. Second, extending the temporal sequence model to detect fraud patterns across card, UPI, and NEFT channels using the same behavioural analysis framework. Third, deploying a unified customer risk scoring model combining AML, fraud, sanctions, and credit risk into a single enterprise profile powered by the same feature store and graph infrastructure. Each extension adds a new model and consumer service to the Kafka backbone without modifying the core platform. For other institutions evaluating machine learning for AML detection, the architecture pattern here, which combines graph neural networks with temporal analysis and RAG assisted investigation, offers a replicable blueprint for modernising compliance operations.

Conclusion

Three insights from this engagement stand out. Technically, modelling entity relationships as a dynamic graph rather than evaluating transactions in isolation was the most impactful architectural choice, because money laundering is fundamentally a network phenomenon that transaction level analysis cannot capture. Operationally, the greatest transformation was the shift from a team overwhelmed by noise to one focused on genuine investigative work, with AI handling triage, prioritisation, and narrative drafting so that human expertise is applied where it matters most. Strategically, the AI compliance capability became a regulatory differentiator, moving the bank from receiving examination findings to demonstrating infrastructure that regulators cited as a sector benchmark.

KriraAI brings this same engineering rigour, from data pipeline architecture through model training through production MLOps, to every engagement. We build systems that handle the full complexity of enterprise environments where data is messy, integrations are customised, regulations are specific, and production readiness is defined by auditors, not demo day presentations. If your institution operates a compliance function that generates more noise than insight, bring that challenge to KriraAI and let us build the system that changes the ratio.

FAQs

AI improves AML transaction monitoring by replacing static, threshold based rules with machine learning models that evaluate risk across multiple dimensions simultaneously. In the system KriraAI built, a graph neural network analyses entity relationships to detect network level laundering patterns, a temporal convolutional network identifies behavioural anomalies, and an ensemble model combines both with engineered features into a calibrated risk score. This AI transaction monitoring approach reduced false positives by 60% while maintaining 98.3% detection on confirmed suspicious activity, because AI learns the difference between genuinely suspicious patterns and routine business behaviour triggering static rules.

The return on investment from AI in financial compliance depends on alert volume, false positive rates, and staffing costs. In this engagement, the bank spent over 27,000 analyst hours monthly investigating false positives. After ComplianceGraph deployed, the false positive rate dropped from 94% to 37.6% and investigation time fell by 67%. The bank's MLRO confirmed payback within eight months, with annualised savings exceeding 9.4 crore rupees. Beyond cost savings, the platform eliminated regulatory findings, reduced reputational risk, and enabled the team to absorb 30% more transaction volume without hiring.

AI can dramatically reduce false positives by learning to distinguish genuinely suspicious patterns from benign activity that superficially resembles suspicious behaviour. Traditional rules engines evaluate single attributes against fixed thresholds without context. The AI transaction monitoring platform KriraAI built reduced false positives by 60% because it evaluates each transaction within the entity's full relationship network, historical patterns, and 47 engineered features. The 42 previously undetected suspicious patterns identified in the first six months demonstrate that reducing noise does not sacrifice detection sensitivity.

Modern AI powered AML systems combine graph neural networks for entity relationship analysis, temporal models for behavioural detection, ensemble models for risk scoring, and large language models for investigation narrative generation. In ComplianceGraph, GraphSAGE handled inductive learning on transaction networks, a temporal convolutional network used contrastive learning on confirmed suspicious sequences, XGBoost combined neural outputs with handcrafted features, and a fine tuned Mistral 7B served via vLLM generated narratives through RAG. This combination of graph neural networks with temporal and generative AI represents the current state of the art in machine learning for AML detection.

The timeline for implementing AI in financial services depends on data readiness, integration complexity, and regulatory validation. KriraAI completed this project in 32 weeks. Data audit and entity resolution consumed five weeks, a foundational step because unresolved counterparty inconsistencies would have caused the models to undercount relationship signals by approximately 18%. Architecture and prototyping took seven weeks. Development and integration required twelve weeks, with the Actimize integration the most intensive part. Validation and phased deployment took eight weeks, including shadow mode. Financial institutions should plan seven to nine months for production grade compliance AI deployment.

Founder & CEO

Divyang Mandani is the CEO of KriraAI, driving innovative AI and IT solutions with a focus on transformative technology, ethical AI, and impactful digital strategies for businesses worldwide.